Utilizing Apache Pulsar to Populate Apache Iceberg and Apache Parquet based Lakehouses

FLiP-Pi-Iceberg-Thermal

Apache Iceberg + Apache Pulsar + Thermal Sensor Data from a Raspberry Pi

Steps

- Run Apache Pulsar 2.10.2 (standalone, docker, baremetal cluster, VM cluster, K8 cluster, AWS Marketplace Pulsar, StreamNative Cloud)

- Run Apache Iceberg 1.1.0 (docker, ...)

- Run Apache Spark 3.2

- Deploy Pulsar connector

- Send data to Pulsar topic

- Query Iceberg in Spark

Sensor Python App Sending messages

{'uuid': 'thrml_wse_20221216202136', 'ipaddress': '192.168.1.179', 'cputempf': 122, 'runtime': 0, 'host': 'thermal', 'hostname': 'thermal', 'macaddress': 'e4:5f:01:7c:3f:34', 'endtime': '1671222096.350368', 'te': '0.0005612373352050781', 'cpu': 5.5, 'diskusage': '101858.0 MB', 'memory': 9.9, 'rowid': '20221216202136_a0e9eae8-3b4f-4222-95c6-7657ba0e12e2', 'systemtime': '12/16/2022 15:21:41', 'ts': 1671222101, 'starttime': '12/16/2022 15:21:36', 'datetimestamp': '2022-12-16 20:21:40.012859+00:00', 'temperature': 30.5959, 'humidity': 26.07, 'co2': 767.0, 'totalvocppb': 0.0, 'equivalentco2ppm': 400.0, 'pressure': 99773.53, 'temperatureicp': 86.0}
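A minimal sketch of how a reading like the one above could be published from Python. It assumes the `pulsar-client` package, a broker at `pulsar://localhost:6650`, and the topic `persistent://public/default/thermalsensors`. The function names and the reduced field set are illustrative and are not the actual sensor app. It sends plain JSON bytes for brevity; a sink that writes typed columns will generally need the topic to carry a schema, so treat this only as the shape of the producer loop.

```python
import json
import time
import uuid
from datetime import datetime, timezone


def build_reading(temperature: float, humidity: float, co2: float) -> dict:
    """Build a reduced reading using field names from the sample message."""
    now = datetime.now(timezone.utc)
    stamp = now.strftime("%Y%m%d%H%M%S")
    return {
        "uuid": f"thrml_{stamp}",            # simplified: the sample also has a random infix
        "rowid": f"{stamp}_{uuid.uuid4()}",
        "ts": int(time.time()),               # epoch seconds, as in the sample
        "datetimestamp": str(now),            # UTC timestamp with offset
        "temperature": temperature,
        "humidity": humidity,
        "co2": co2,
    }


def send_reading(reading: dict,
                 service_url: str = "pulsar://localhost:6650",
                 topic: str = "persistent://public/default/thermalsensors") -> None:
    """Publish one reading as UTF-8 JSON bytes (needs a running broker)."""
    import pulsar  # lazy import so build_reading works without the client installed

    client = pulsar.Client(service_url)
    try:
        producer = client.create_producer(topic)
        producer.send(json.dumps(reading).encode("utf-8"))
    finally:
        client.close()


if __name__ == "__main__":
    print(json.dumps(build_reading(30.5959, 26.07, 767.0)))
```

Call `send_reading(build_reading(...))` in a loop once a broker is reachable.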

Pulsar Sink Deploy

bin/pulsar-admin sinks stop --name iceberg_sink --namespace default --tenant public

bin/pulsar-admin sinks delete --tenant public --namespace default --name iceberg_sink

bin/pulsar-admin sinks create --sink-config-file conf/iceberg.json
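The contents of `conf/iceberg.json` are not shown here. The sketch below generates one plausible version. Every value is an assumption for a local test: the input topic, the `archive` path, and `catalogName` are hypothetical, and the key names under `configs` are recalled from the lakehouse sink documentation linked in the references, so verify them there before use. The warehouse, namespace, and table name are chosen to match the directory listing later in this post.

```python
import json

# All values are assumptions for a local test; check key names against the
# lakehouse sink documentation before relying on them.
SINK_CONFIG = {
    "tenant": "public",
    "namespace": "default",
    "name": "iceberg_sink",
    "parallelism": 1,
    "inputs": ["persistent://public/default/thermalsensors"],  # assumed input topic
    "archive": "connectors/pulsar-io-lakehouse.nar",           # hypothetical NAR path
    "configs": {
        "type": "iceberg",
        "maxCommitInterval": 120,
        "maxRecordsPerCommit": 10000000,
        "catalogName": "test_v1",                               # hypothetical
        "tableNamespace": "iceberg_sink_test",
        "tableName": "ice_sink_thermal",
        "catalogProperties": {
            "warehouse": "file:///Users/tspann/Downloads/iceberg",
            "catalog-impl": "hadoopCatalog",
        },
    },
}


def render_sink_config() -> str:
    """Return the sink configuration as pretty-printed JSON."""
    return json.dumps(SINK_CONFIG, indent=2)


if __name__ == "__main__":
    with open("iceberg.json", "w", encoding="utf-8") as fh:
        fh.write(render_sink_config())
```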

Pulsar Sink Status

bin/pulsar-admin sinks status --tenant public --namespace default --name iceberg_sink

{
  "numInstances" : 1,
  "numRunning" : 1,
  "instances" : [ {
    "instanceId" : 0,
    "status" : {
      "running" : true,
      "error" : "",
      "numRestarts" : 0,
      "numReadFromPulsar" : 10,
      "numSystemExceptions" : 0,
      "latestSystemExceptions" : [ ],
      "numSinkExceptions" : 0,
      "latestSinkExceptions" : [ ],
      "numWrittenToSink" : 10,
      "lastReceivedTime" : 1671220772536,
      "workerId" : "c-standalone-fw-127.0.0.1-8080"
    }
  } ]
}
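The status output above can be checked programmatically. This is a small sketch using only the standard library; the function names are illustrative, and requiring `numWrittenToSink` to equal `numReadFromPulsar` is a deliberately strict choice that may report a false alarm while records are still in flight.

```python
import json
from datetime import datetime, timezone

STATUS_JSON = """
{"numInstances": 1, "numRunning": 1, "instances": [{"instanceId": 0, "status": {
  "running": true, "error": "", "numRestarts": 0, "numReadFromPulsar": 10,
  "numSystemExceptions": 0, "latestSystemExceptions": [],
  "numSinkExceptions": 0, "latestSinkExceptions": [],
  "numWrittenToSink": 10, "lastReceivedTime": 1671220772536,
  "workerId": "c-standalone-fw-127.0.0.1-8080"}}]}
"""


def sink_is_healthy(status: dict) -> bool:
    """True when all instances run, report no errors, and wrote all they read."""
    if status["numRunning"] != status["numInstances"]:
        return False
    for instance in status["instances"]:
        s = instance["status"]
        if not s["running"] or s["error"]:
            return False
        if s["numSystemExceptions"] or s["numSinkExceptions"]:
            return False
        if s["numWrittenToSink"] != s["numReadFromPulsar"]:
            return False
    return True


def last_received_utc(status: dict) -> datetime:
    """Convert the newest lastReceivedTime (epoch milliseconds) to a UTC datetime."""
    newest_ms = max(i["status"]["lastReceivedTime"] for i in status["instances"])
    return datetime.fromtimestamp(newest_ms / 1000, tz=timezone.utc)


if __name__ == "__main__":
    status = json.loads(STATUS_JSON)
    print("healthy:", sink_is_healthy(status))
    print("last received:", last_received_utc(status).isoformat())
```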

Iceberg data written via Pulsar Lakehouse Cloud Sink

ls -lt /Users/tspann/Downloads/iceberg/iceberg_sink_test/ice_sink_thermal

total 0

drwxr-xr-x 94 tspann staff 3008 Dec 16 15:45 metadata

drwxr-xr-x 34 tspann staff 1088 Dec 16 15:45 data

ice_sink_thermal/metadata

total 856

-rw-r--r-- 1 tspann staff 2 Dec 16 15:45 version-hint.text

-rw-r--r-- 1 tspann staff 19283 Dec 16 15:45 v15.metadata.json

-rw-r--r-- 1 tspann staff 4352 Dec 16 15:45 snap-8802315029762513718-1-78627844-0d69-4c2e-87db-016b9fdac119.avro

-rw-r--r-- 1 tspann staff 7536 Dec 16 15:45 78627844-0d69-4c2e-87db-016b9fdac119-m0.avro

-rw-r--r-- 1 tspann staff 18303 Dec 16 15:43 v14.metadata.json

-rw-r--r-- 1 tspann staff 4315 Dec 16 15:43 snap-1218246990201737819-1-1253a40d-fae5-4919-9d71-be51af402899.avro

iceberg_sink_test/ice_sink_thermal/data

total 360

-rw-r--r-- 1 tspann staff 9771 Dec 16 15:45 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00014.parquet

-rw-r--r-- 1 tspann staff 9782 Dec 16 15:43 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00013.parquet

-rw-r--r-- 1 tspann staff 9733 Dec 16 15:41 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00012.parquet

-rw-r--r-- 1 tspann staff 9637 Dec 16 15:39 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00011.parquet

-rw-r--r-- 1 tspann staff 9722 Dec 16 15:37 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00010.parquet

-rw-r--r-- 1 tspann staff 9663 Dec 16 15:35 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00009.parquet

-rw-r--r-- 1 tspann staff 9671 Dec 16 15:33 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00008.parquet

-rw-r--r-- 1 tspann staff 9652 Dec 16 15:31 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00007.parquet

-rw-r--r-- 1 tspann staff 9716 Dec 16 15:29 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00006.parquet

-rw-r--r-- 1 tspann staff 9731 Dec 16 15:27 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00005.parquet

-rw-r--r-- 1 tspann staff 9639 Dec 16 15:25 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00004.parquet

-rw-r--r-- 1 tspann staff 9721 Dec 16 15:23 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00003.parquet

-rw-r--r-- 1 tspann staff 7414 Dec 16 15:21 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00002.parquet

-rw-r--r-- 1 tspann staff 8492 Dec 16 14:59 00000-1-951a37fc-5069-4201-94fa-4ef9975f6293-00001.parquet

-rw-r--r-- 1 tspann staff 7978 Dec 16 14:56 00000-1-2acfc6ba-4f49-44c7-8f17-1f3491484fd1-00001.parquet

-rw-r--r-- 1 tspann staff 7886 Dec 16 14:54 00000-1-7e714ed7-0ba5-41a4-b8e1-1e1d261e3b83-00001.parquet

Schema Embedded in Parquet File

{"type":"struct","schema-id":0,"fields":[{"id":1,"name":"uuid","required":true,"type":"string"},{"id":2,"name":"ipaddress","required":true,"type":"string"},{"id":3,"name":"cputempf","required":true,"type":"int"},{"id":4,"name":"runtime","required":true,"type":"int"},{"id":5,"name":"host","required":true,"type":"string"},{"id":6,"name":"hostname","required":true,"type":"string"},{"id":7,"name":"macaddress","required":true,"type":"string"},{"id":8,"name":"endtime","required":true,"type":"string"},{"id":9,"name":"te","required":true,"type":"string"},{"id":10,"name":"cpu","required":true,"type":"float"},{"id":11,"name":"diskusage","required":true,"type":"string"},{"id":12,"name":"memory","required":true,"type":"float"},{"id":13,"name":"rowid","required":true,"type":"string"},{"id":14,"name":"systemtime","required":true,"type":"string"},{"id":15,"name":"ts","required":true,"type":"int"},{"id":16,"name":"starttime","required":true,"type":"string"},{"id":17,"name":"datetimestamp","required":true,"type":"string"},{"id":18,"name":"temperature","required":true,"type":"float"},{"id":19,"name":"humidity","required":true,"type":"float"},{"id":20,"name":"co2","required":true,"type":"float"},{"id":21,"name":"totalvocppb","required":true,"type":"float"},{"id":22,"name":"equivalentco2ppm","required":true,"type":"float"},{"id":23,"name":"pressure","required":true,"type":"float"},{"id":24,"name":"temperatureicp","required":true,"type":"float"}]}

parquet-mr version 1.12.0 (build db75a6815f2ba1d1ee89d1a90aeb296f1f3a8f20)
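The embedded schema is ordinary JSON, so it can be summarised with a few lines of Python. This sketch uses a three-field excerpt of the schema above to stay short; the `describe` helper is illustrative.

```python
import json

# Three fields copied from the embedded schema; the full schema has 24.
SCHEMA_EXCERPT = """
{"type":"struct","schema-id":0,"fields":[
 {"id":1,"name":"uuid","required":true,"type":"string"},
 {"id":3,"name":"cputempf","required":true,"type":"int"},
 {"id":18,"name":"temperature","required":true,"type":"float"}]}
"""


def describe(schema_json: str) -> list:
    """Return one 'name type [NOT NULL]' line per field in the schema."""
    schema = json.loads(schema_json)
    lines = []
    for field in schema["fields"]:
        suffix = " NOT NULL" if field["required"] else ""
        lines.append(f"{field['name']} {field['type']}{suffix}")
    return lines


if __name__ == "__main__":
    for line in describe(SCHEMA_EXCERPT):
        print(line)
```

Every field is marked `required`, which is why the Flink table definition later in this post declares each column `NOT NULL`.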

Validate our Parquet Files

pip3.9 install parquet-tools -U

parquet-tools inspect ice_sink_thermal/data/00000-1-7e714ed7-0ba5-41a4-b8e1-1e1d261e3b83-00001.parquet

############ file meta data ############

created_by: parquet-mr version 1.12.0 (build db75a6815f2ba1d1ee89d1a90aeb296f1f3a8f20)

num_columns: 24

num_rows: 4

num_row_groups: 1

format_version: 1.0

serialized_size: 4577

############ Columns ############

uuid

ipaddress

cputempf

runtime

host

hostname

macaddress

endtime

te

cpu

diskusage

memory

rowid

systemtime

ts

starttime

datetimestamp

temperature

humidity

co2

totalvocppb

equivalentco2ppm

pressure

temperatureicp

############ Column(uuid) ############

name: uuid

path: uuid

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 28%)

############ Column(ipaddress) ############

name: ipaddress

path: ipaddress

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: -67%)

############ Column(cputempf) ############

name: cputempf

path: cputempf

max_definition_level: 0

max_repetition_level: 0

physical_type: INT32

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -38%)

############ Column(runtime) ############

name: runtime

path: runtime

max_definition_level: 0

max_repetition_level: 0

physical_type: INT32

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -85%)

############ Column(host) ############

name: host

path: host

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: -74%)

############ Column(hostname) ############

name: hostname

path: hostname

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: -74%)

############ Column(macaddress) ############

name: macaddress

path: macaddress

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: -62%)

############ Column(endtime) ############

name: endtime

path: endtime

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 17%)

############ Column(te) ############

name: te

path: te

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 16%)

############ Column(cpu) ############

name: cpu

path: cpu

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -75%)

############ Column(diskusage) ############

name: diskusage

path: diskusage

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: -69%)

############ Column(memory) ############

name: memory

path: memory

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -85%)

############ Column(rowid) ############

name: rowid

path: rowid

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 33%)

############ Column(systemtime) ############

name: systemtime

path: systemtime

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 33%)

############ Column(ts) ############

name: ts

path: ts

max_definition_level: 0

max_repetition_level: 0

physical_type: INT32

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -41%)

############ Column(starttime) ############

name: starttime

path: starttime

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 33%)

############ Column(datetimestamp) ############

name: datetimestamp

path: datetimestamp

max_definition_level: 0

max_repetition_level: 0

physical_type: BYTE_ARRAY

logical_type: String

converted_type (legacy): UTF8

compression: GZIP (space_saved: 37%)

############ Column(temperature) ############

name: temperature

path: temperature

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -51%)

############ Column(humidity) ############

name: humidity

path: humidity

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -49%)

############ Column(co2) ############

name: co2

path: co2

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -51%)

############ Column(totalvocppb) ############

name: totalvocppb

path: totalvocppb

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -85%)

############ Column(equivalentco2ppm) ############

name: equivalentco2ppm

path: equivalentco2ppm

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -75%)

############ Column(pressure) ############

name: pressure

path: pressure

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -49%)

############ Column(temperatureicp) ############

name: temperatureicp

path: temperatureicp

max_definition_level: 0

max_repetition_level: 0

physical_type: FLOAT

logical_type: None

converted_type (legacy): NONE

compression: GZIP (space_saved: -85%)

Setup

- Download Spark 3.2_2.12

- Download iceberg-spark-runtime-3.2_2.12:1.1.0

Run Spark Shell

bin/spark-sql --packages org.apache.iceberg:iceberg-spark-runtime-3.2_2.12:1.1.0 \
  --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions \
  --conf spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkSessionCatalog \
  --conf spark.sql.catalog.spark_catalog.type=hive \
  --conf spark.sql.catalog.local=org.apache.iceberg.spark.SparkCatalog \
  --conf spark.sql.catalog.local.type=hadoop \
  --conf spark.sql.catalog.local.warehouse=/Users/tspann/Downloads/iceberg/iceberg_sink_test

Spark Shell

desc local.ice_sink_thermal;

uuid string

ipaddress string

cputempf int

runtime int

host string

hostname string

macaddress string

endtime string

te string

cpu float

diskusage string

memory float

rowid string

systemtime string

ts int

starttime string

datetimestamp string

temperature float

humidity float

co2 float

totalvocppb float

equivalentco2ppm float

pressure float

temperatureicp float

# Partitioning

Not partitioned

select * from local.ice_sink_thermal limit 10;

thrml_zlq_20221216202731 192.168.1.179 122 0 thermal thermal e4:5f:01:7c:3f:34 1671222451.063736 0.0005979537963867188 11.1 101858.0 MB 10.0 20221216202731_e414e311-7928-4408-91de-b44666cd14db 12/16/2022 15:27:35 1671222455 12/16/2022 15:27:31 2022-12-16 20:27:34.827283+00:00 26.8868 31.76 771.0 14.0 405.0 99783.77 86.0

thrml_fpa_20221216202735 192.168.1.179 122 0 thermal thermal e4:5f:01:7c:3f:34 1671222455.8950574 0.00046181678771972656 5.5 101858.0 MB 10.0 20221216202735_92a2af14-ebcb-42fd-a935-5a01a47ff95e 12/16/2022 15:27:40 1671222460 12/16/2022 15:27:35 2022-12-16 20:27:39.659337+00:00 26.8761 31.74 771.0 6.0 400.0 99784.8 86.0

thrml_gpv_20221216202740 192.168.1.179 122 0 thermal thermal e4:5f:01:7c:3f:34 1671222460.7061012 0.0006053447723388672 5.5 101858.0 MB 10.0 20221216202740_68f88218-1b40-4f0a-85e3-3fb8f447d65b 12/16/2022 15:27:45 1671222465 12/16/2022 15:27:40 2022-12-16 20:27:44.369295+00:00 26.8761 31.72 770.0 7.0 65535.0 99782.18 85.0

thrml_gxu_20221216202745 192.168.1.179 121 0 thermal thermal e4:5f:01:7c:3f:34 1671222465.500708 0.0006241798400878906 6.0 101858.0 MB 10.0 20221216202745_28685c57-88f5-422b-be23-b32ea12a0d75 12/16/2022 15:27:50 1671222470 12/16/2022 15:27:45 2022-12-16 20:27:49.161722+00:00 26.8681 31.83 771.0 12.0 65535.0 99778.97 85.0

thrml_nyn_20221216202750 192.168.1.179 122 0 thermal thermal e4:5f:01:7c:3f:34 1671222470.212329 0.0006175041198730469 5.5 101858.0 MB 10.0 20221216202750_bbbb7fa7-cebf-4414-b538-30ce84828cea 12/16/2022 15:27:55 1671222475 12/16/2022 15:27:50 2022-12-16 20:27:53.976872+00:00 26.8601 31.78 771.0 6.0 65535.0 99783.55 86.0

thrml_oxl_20221216202755 192.168.1.179 123 0 thermal thermal e4:5f:01:7c:3f:34 1671222475.1313043 0.0006723403930664062 6.6 101858.0 MB 10.0 20221216202755_bda36e1c-246f-4d99-8b39-b558111a1d9e 12/16/2022 15:27:59 1671222479 12/16/2022 15:27:55 2022-12-16 20:27:58.794626+00:00 26.8307 31.73 771.0 7.0 65535.0 99785.26 86.0

thrml_rvg_20221216202759 192.168.1.179 121 0 thermal thermal e4:5f:01:7c:3f:34 1671222479.8391178 0.00048804283142089844 5.5 101858.0 MB 10.4 20221216202759_25b8ee3b-6b59-42e6-8c4c-a8419b76ea40 12/16/2022 15:28:04 1671222484 12/16/2022 15:27:59 2022-12-16 20:28:03.601685+00:00 26.8441 31.76 771.0 6.0 406.0 99786.36 86.0

thrml_wbl_20221216202804 192.168.1.179 123 0 thermal thermal e4:5f:01:7c:3f:34 1671222484.6672618 0.0006029605865478516 5.5 101858.0 MB 10.0 20221216202804_7bd3de16-dbcd-4107-8ab0-b184a2eaf523 12/16/2022 15:28:09 1671222489 12/16/2022 15:28:04 2022-12-16 20:28:08.431971+00:00 26.8842 31.79 770.0 9.0 65535.0 99787.27 86.0

thrml_vwj_20221216202809 192.168.1.179 122 0 thermal thermal e4:5f:01:7c:3f:34 1671222489.4752936 0.0006010532379150391 9.9 101858.0 MB 10.0 20221216202809_fed14b6d-b211-48ad-b31f-0a486b8d0f0d 12/16/2022 15:28:14 1671222494 12/16/2022 15:28:09 2022-12-16 20:28:13.137929+00:00 26.9002 31.78 770.0 5.0 400.0 99782.4 86.0

thrml_oox_20221216202814 192.168.1.179 123 0 thermal thermal e4:5f:01:7c:3f:34 1671222494.2805836 0.0005502700805664062 4.8 101858.0 MB 10.0 20221216202814_89526e7c-980b-4dbe-8257-c1c6944cdbd3 12/16/2022 15:28:18 1671222498 12/16/2022 15:28:14 2022-12-16 20:28:17.943010+00:00 26.9162 31.72 769.0 1.0 65535.0 99789.35 86.0

Time taken: 0.835 seconds, Fetched 10 row(s)
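Several rows above report `equivalentco2ppm` as 65535.0 while neighbouring rows show values near 400. The sketch below separates such rows. Treating 65535.0 (0xFFFF) as an invalid-reading marker is an assumption made here, not something the sensor documentation in this post confirms. The rows are `(uuid, equivalentco2ppm)` pairs copied from the first four result lines.

```python
# Assumption: 65535.0 (0xFFFF) marks a reading the sensor could not produce.
SENTINEL_PPM = 65535.0

ROWS = [
    ("thrml_zlq_20221216202731", 405.0),
    ("thrml_fpa_20221216202735", 400.0),
    ("thrml_gpv_20221216202740", 65535.0),
    ("thrml_gxu_20221216202745", 65535.0),
]


def split_by_validity(rows, sentinel=SENTINEL_PPM):
    """Return (valid, suspect) lists, where suspect rows equal the sentinel."""
    valid = [row for row in rows if row[1] != sentinel]
    suspect = [row for row in rows if row[1] == sentinel]
    return valid, suspect


if __name__ == "__main__":
    valid, suspect = split_by_validity(ROWS)
    print("valid:", valid)
    print("suspect:", suspect)
```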

select uuid, ts, datetimestamp, co2, humidity, pressure, temperature

from local.ice_sink_thermal limit 10;

thrml_rwa_20221216203730 1671223055 2022-12-16 20:37:34.204439+00:00 783.0 32.22 99792.16 26.7613

thrml_qqs_20221216203735 1671223060 2022-12-16 20:37:39.013362+00:00 783.0 32.23 99791.39 26.78

thrml_szi_20221216203740 1671223064 2022-12-16 20:37:43.755430+00:00 784.0 32.14 99794.28 26.7934

thrml_rvb_20221216203744 1671223069 2022-12-16 20:37:48.563791+00:00 784.0 32.16 99796.71 26.8147

thrml_rto_20221216203749 1671223074 2022-12-16 20:37:53.373391+00:00 783.0 32.11 99796.0 26.8575

thrml_svv_20221216203754 1671223079 2022-12-16 20:37:58.184190+00:00 783.0 32.07 99791.21 26.8842

thrml_aov_20221216203759 1671223084 2022-12-16 20:38:02.991664+00:00 782.0 31.95 99794.04 26.9082

thrml_tzs_20221216203804 1671223088 2022-12-16 20:38:07.802992+00:00 782.0 32.02 99794.33 26.9322

thrml_fso_20221216203808 1671223093 2022-12-16 20:38:12.613666+00:00 783.0 32.05 99792.42 26.9589

thrml_czp_20221216203813 1671223098 2022-12-16 20:38:17.321001+00:00 783.0 31.98 99790.26 26.9803

Time taken: 0.898 seconds, Fetched 10 row(s)
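The same table can be queried from PySpark. This sketch reuses the `local` catalog settings from the `spark-sql` command and assumes `pyspark` 3.2 is installed; it leaves out the `spark_catalog` Hive settings because the queries only touch `local`. `spark.jars.packages` is the configuration-key form of `--packages`. The helper names are illustrative.

```python
def iceberg_conf(warehouse: str) -> dict:
    """Spark settings for a Hadoop-type Iceberg catalog named 'local'."""
    return {
        "spark.jars.packages":
            "org.apache.iceberg:iceberg-spark-runtime-3.2_2.12:1.1.0",
        "spark.sql.extensions":
            "org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions",
        "spark.sql.catalog.local": "org.apache.iceberg.spark.SparkCatalog",
        "spark.sql.catalog.local.type": "hadoop",
        "spark.sql.catalog.local.warehouse": warehouse,
    }


def build_session(warehouse: str):
    """Create a SparkSession with the Iceberg catalog configured."""
    from pyspark.sql import SparkSession  # lazy import: only needed to run queries

    builder = SparkSession.builder.appName("iceberg-thermal")
    for key, value in iceberg_conf(warehouse).items():
        builder = builder.config(key, value)
    return builder.getOrCreate()


if __name__ == "__main__":
    spark = build_session("/Users/tspann/Downloads/iceberg/iceberg_sink_test")
    spark.sql(
        "select uuid, ts, datetimestamp, co2, humidity, pressure, temperature "
        "from local.ice_sink_thermal limit 10"
    ).show(truncate=False)
```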

References

- https://github.com/tspannhw/FLiP-Pi-DeltaLake-Thermal

- https://iceberg.apache.org/docs/latest/getting-started/

- https://github.com/streamnative/pulsar-io-lakehouse/blob/master/docs/lakehouse-sink.md

- https://streamnative.io/blog/release/2022-12-14-announcing-the-iceberg-sink-connector-for-apache-pulsar/

- https://hub.streamnative.io/connectors/lakehouse-sink/v2.10.1.12/

- https://github.com/tspannhw/FLiP-Pi-Thermal

- https://dzone.com/articles/pulsar-in-python-on-pi

- https://github.com/tabular-io/docker-spark-iceberg

- https://stackoverflow.com/questions/73791829/delta-lake-sink-connector-for-apache-pulsar-with-minio-throws-java-lang-illegal

- https://thenewstack.io/apache-iceberg-a-different-table-design-for-big-data/

2022/2023 - Tim Spann - @PaaSDev

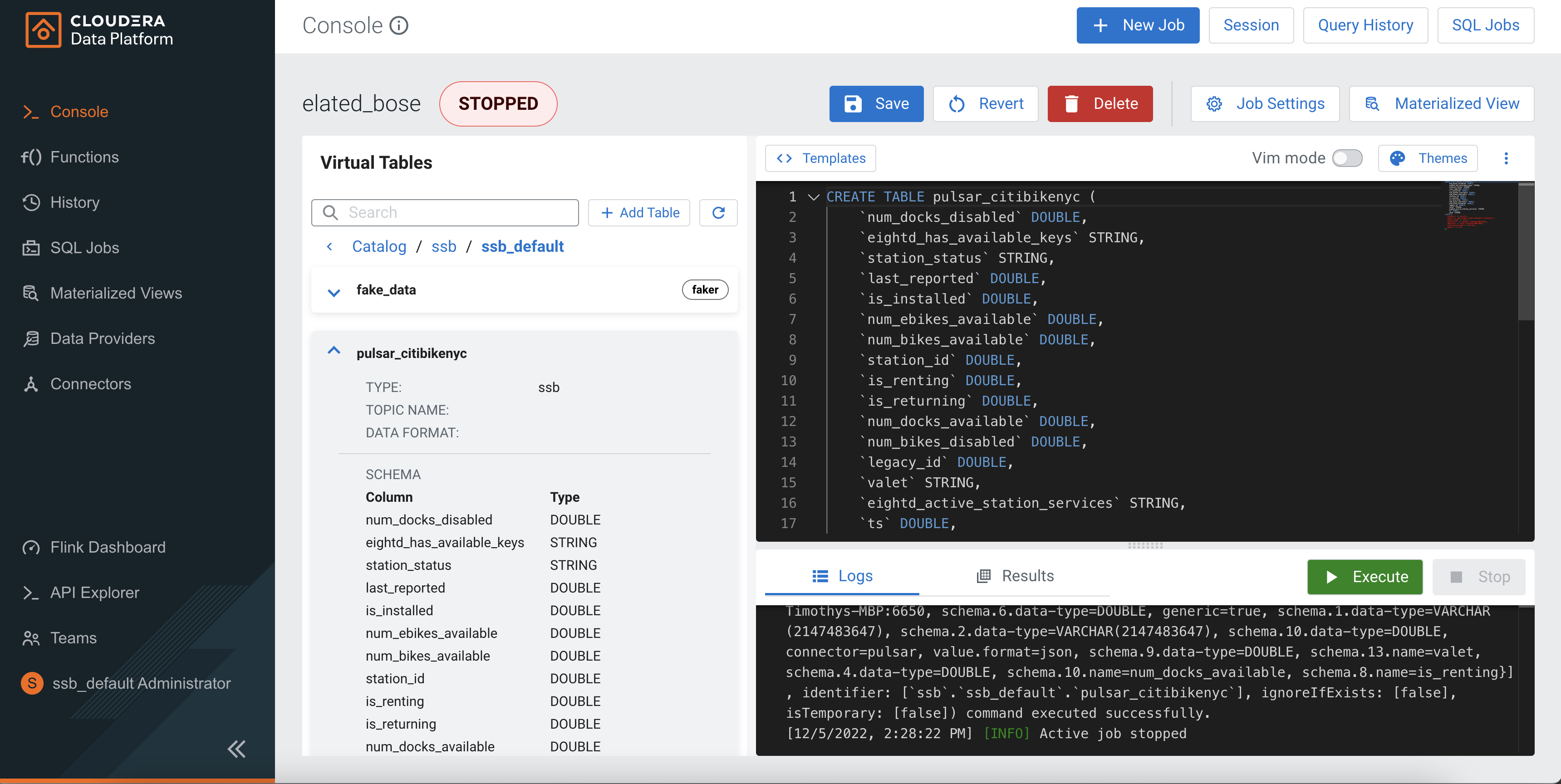

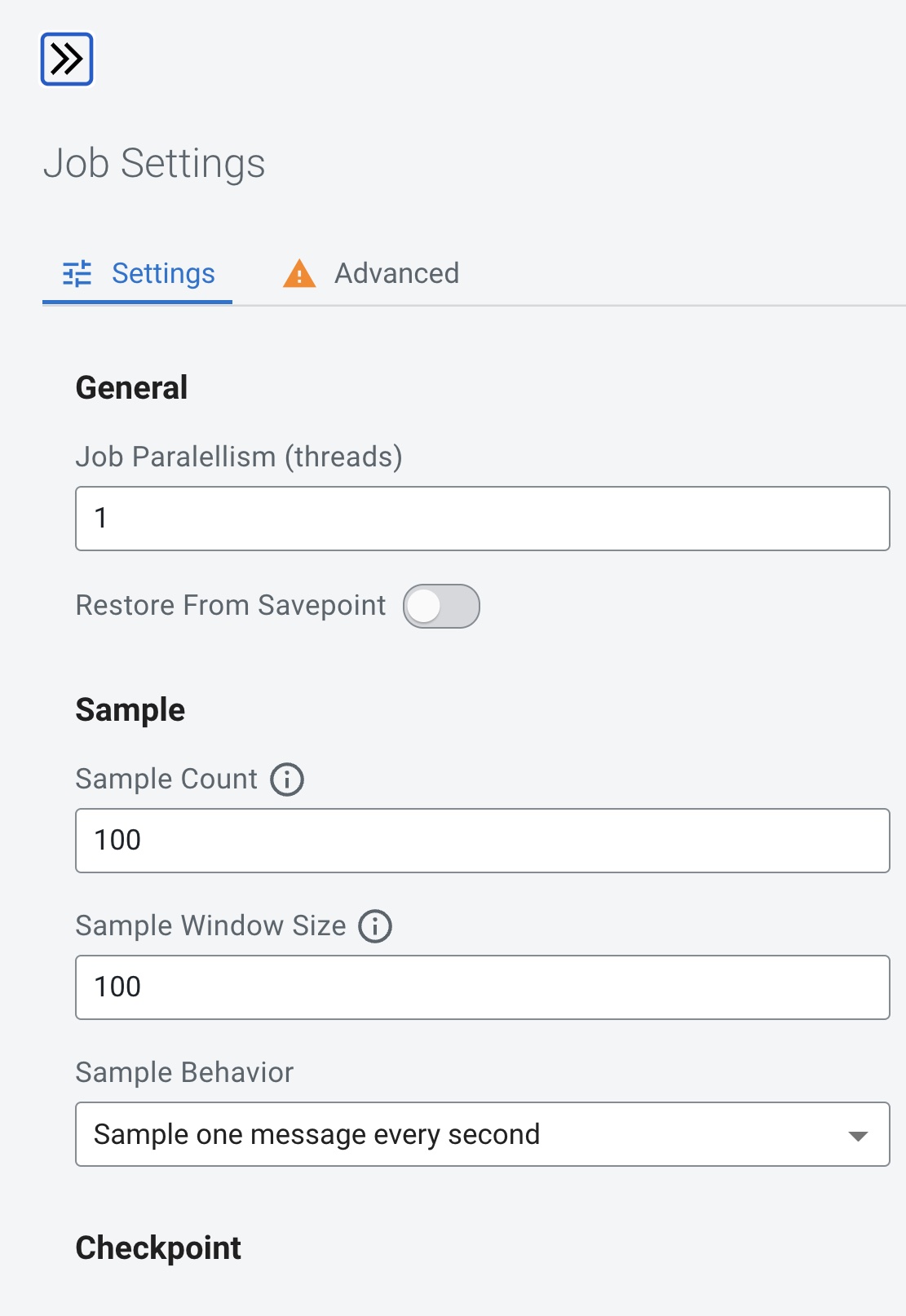

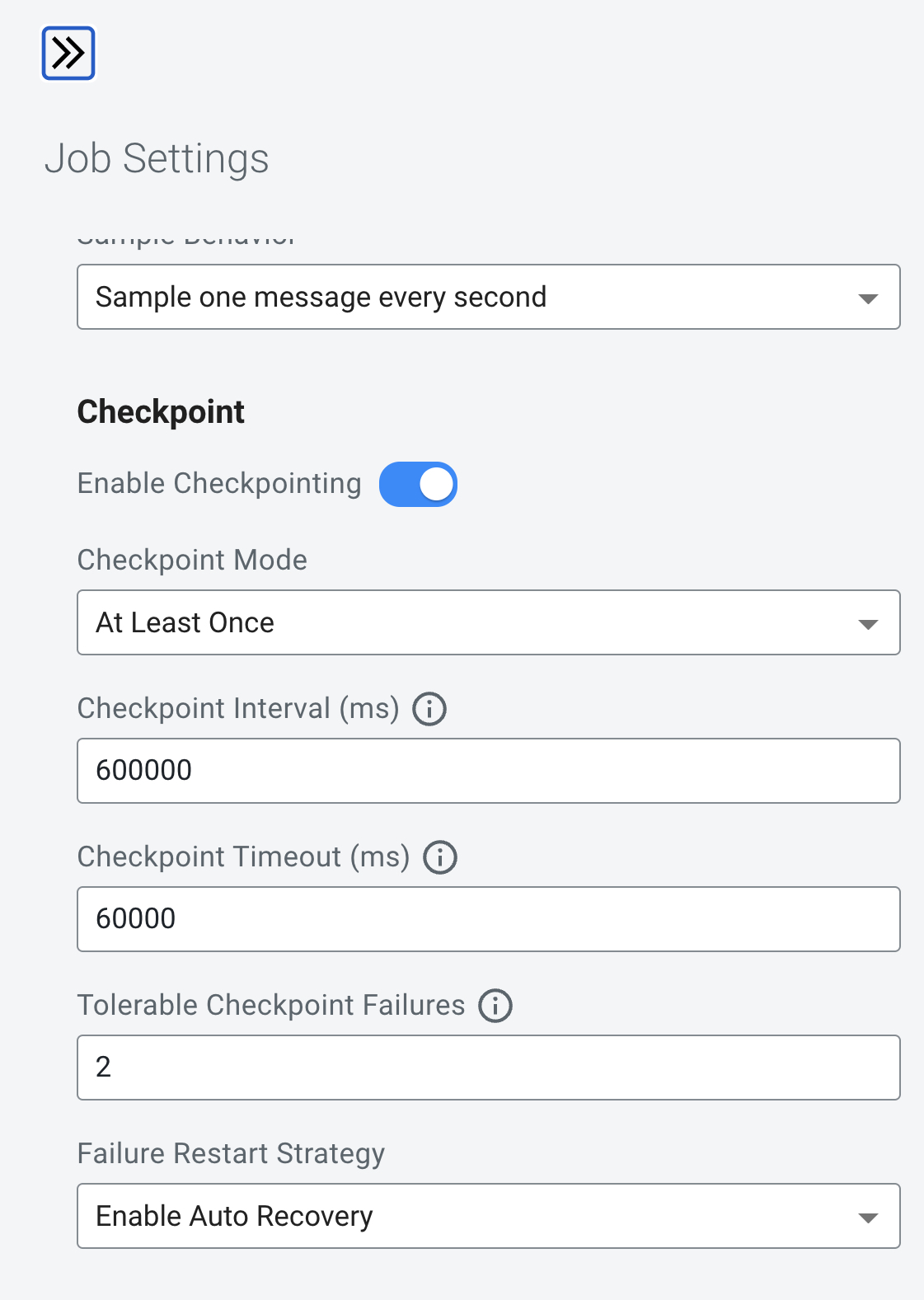

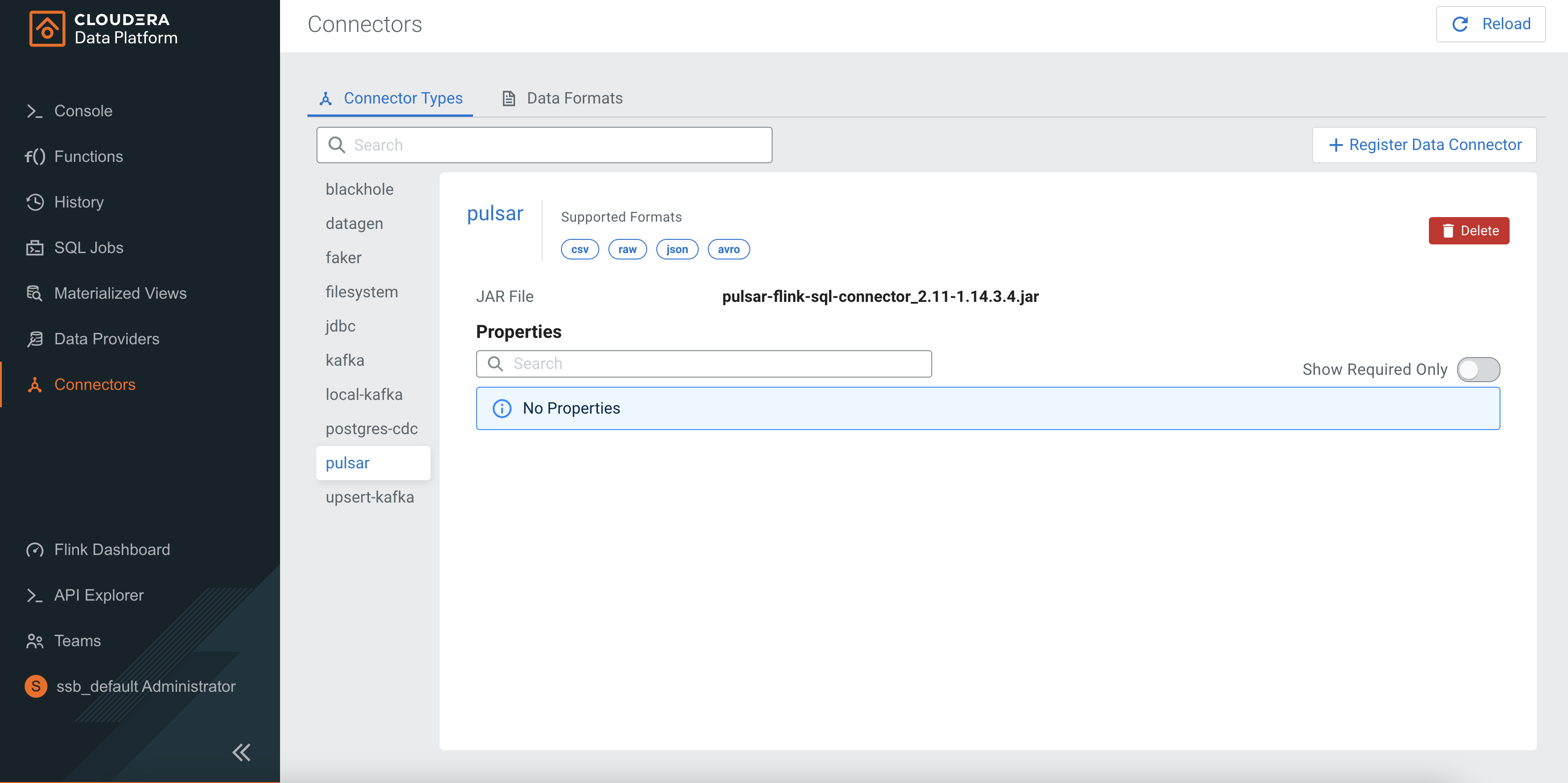

Building Streaming Applications with Cloudera SQL Stream Builder and Apache Pulsar

Cloudera CSP CE Plus Apache Pulsar

Integration

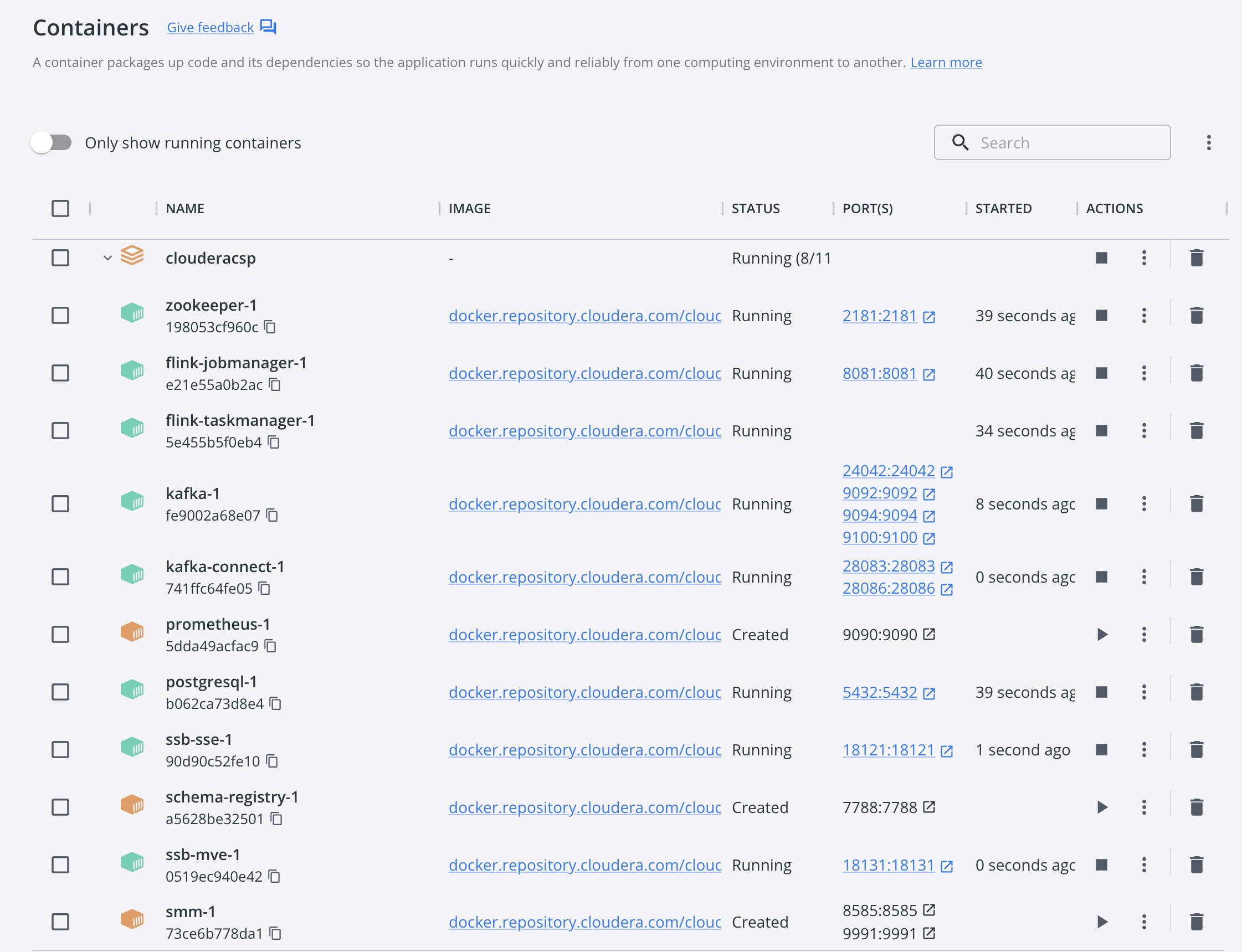

Once running in docker

http://localhost:18121/ui/login

Login

admin/admin

SQL Testing

CREATE TABLE pulsar_test (

`city` STRING

) WITH (

'connector' = 'pulsar',

'topic' = 'topic82547611',

'value.format' = 'raw',

'service-url' = 'pulsar://Timothys-MBP:6650',

'admin-url' = 'http://Timothys-MBP:8080',

'scan.startup.mode' = 'earliest',

'generic' = 'true'

);

CREATE TABLE `pulsar_table_1670269295` (

`col_str` STRING,

`col_int` INT,

`col_ts` TIMESTAMP(3),

WATERMARK FOR `col_ts` AS col_ts - INTERVAL '5' SECOND

) WITH (

'format' = 'json' -- Data format

-- 'json.encode.decimal-as-plain-number' = 'false' -- Optional flag to specify whether to encode all decimals as plain numbers instead of possible scientific notations, false by default.

-- 'json.fail-on-missing-field' = 'false' -- Optional flag to specify whether to fail if a field is missing or not, false by default.

-- 'json.ignore-parse-errors' = 'false' -- Optional flag to skip fields and rows with parse errors instead of failing; fields are set to null in case of errors, false by default.

-- 'json.map-null-key.literal' = 'null' -- Optional flag to specify string literal for null keys when 'map-null-key.mode' is LITERAL, \"null\" by default.

-- 'json.map-null-key.mode' = 'FAIL' -- Optional flag to control the handling mode when serializing null key for map data, FAIL by default. Option DROP will drop null key entries for map data. Option LITERAL will use 'map-null-key.literal' as key literal.

-- 'json.timestamp-format.standard' = 'SQL' -- Optional flag to specify timestamp format, SQL by default. Option ISO-8601 will parse input timestamp in \"yyyy-MM-ddTHH:mm:ss.s{precision}\" format and output timestamp in the same format. Option SQL will parse input timestamp in \"yyyy-MM-dd HH:mm:ss.s{precision}\" format and output timestamp in the same format.

);

CREATE TABLE pulsar_citibikenyc (

`num_docks_disabled` DOUBLE,

`eightd_has_available_keys` STRING,

`station_status` STRING,

`last_reported` DOUBLE,

`is_installed` DOUBLE,

`num_ebikes_available` DOUBLE,

`num_bikes_available` DOUBLE,

`station_id` DOUBLE,

`is_renting` DOUBLE,

`is_returning` DOUBLE,

`num_docks_available` DOUBLE,

`num_bikes_disabled` DOUBLE,

`legacy_id` DOUBLE,

`valet` STRING,

`eightd_active_station_services` STRING,

`ts` DOUBLE,

`uuid` STRING

) WITH (

'connector' = 'pulsar',

'topic' = 'persistent://public/default/citibikenyc',

'value.format' = 'json',

'service-url' = 'pulsar://Timothys-MBP:6650',

'admin-url' = 'http://Timothys-MBP:8080',

'scan.startup.mode' = 'earliest',

'generic' = 'true'

);

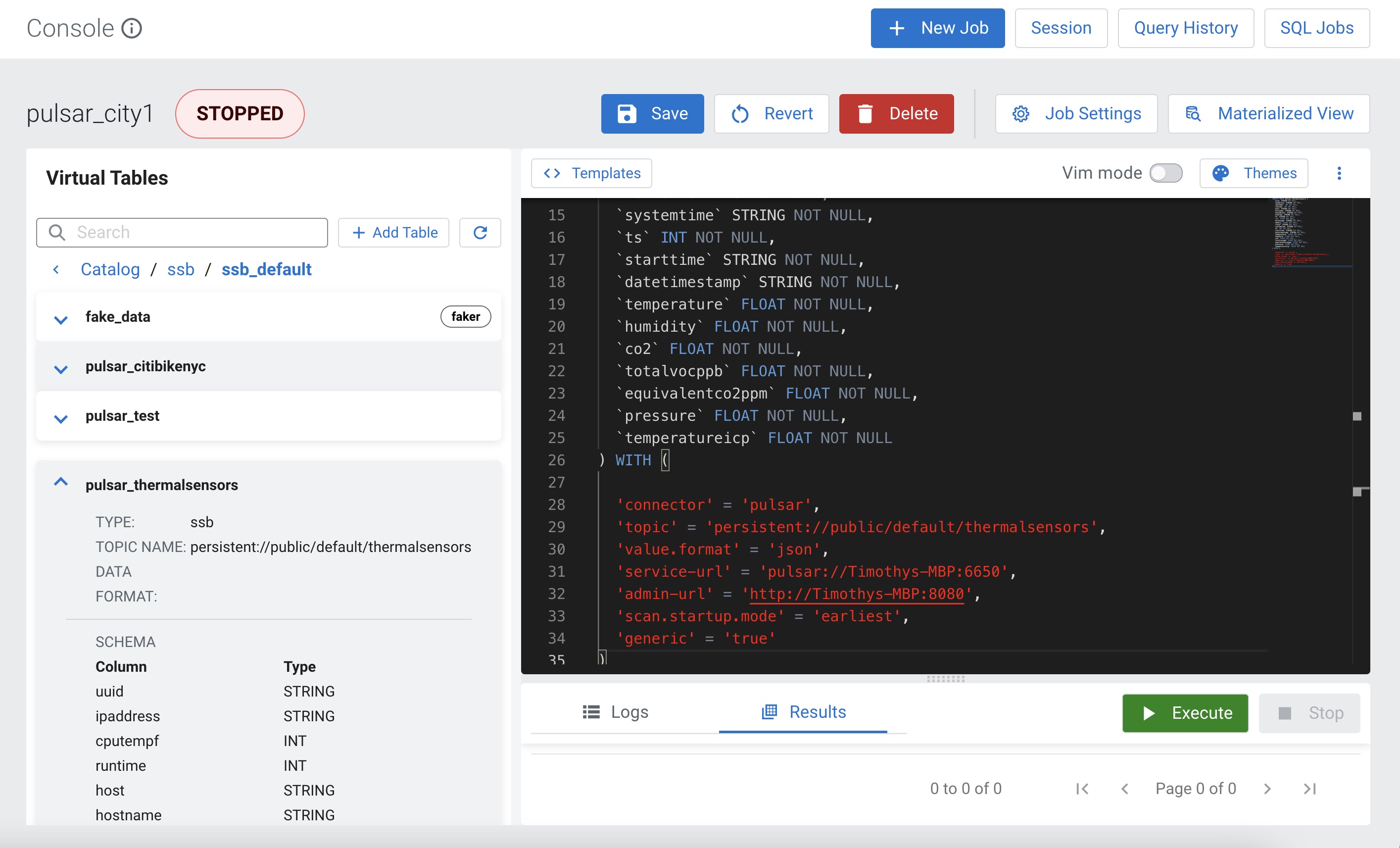

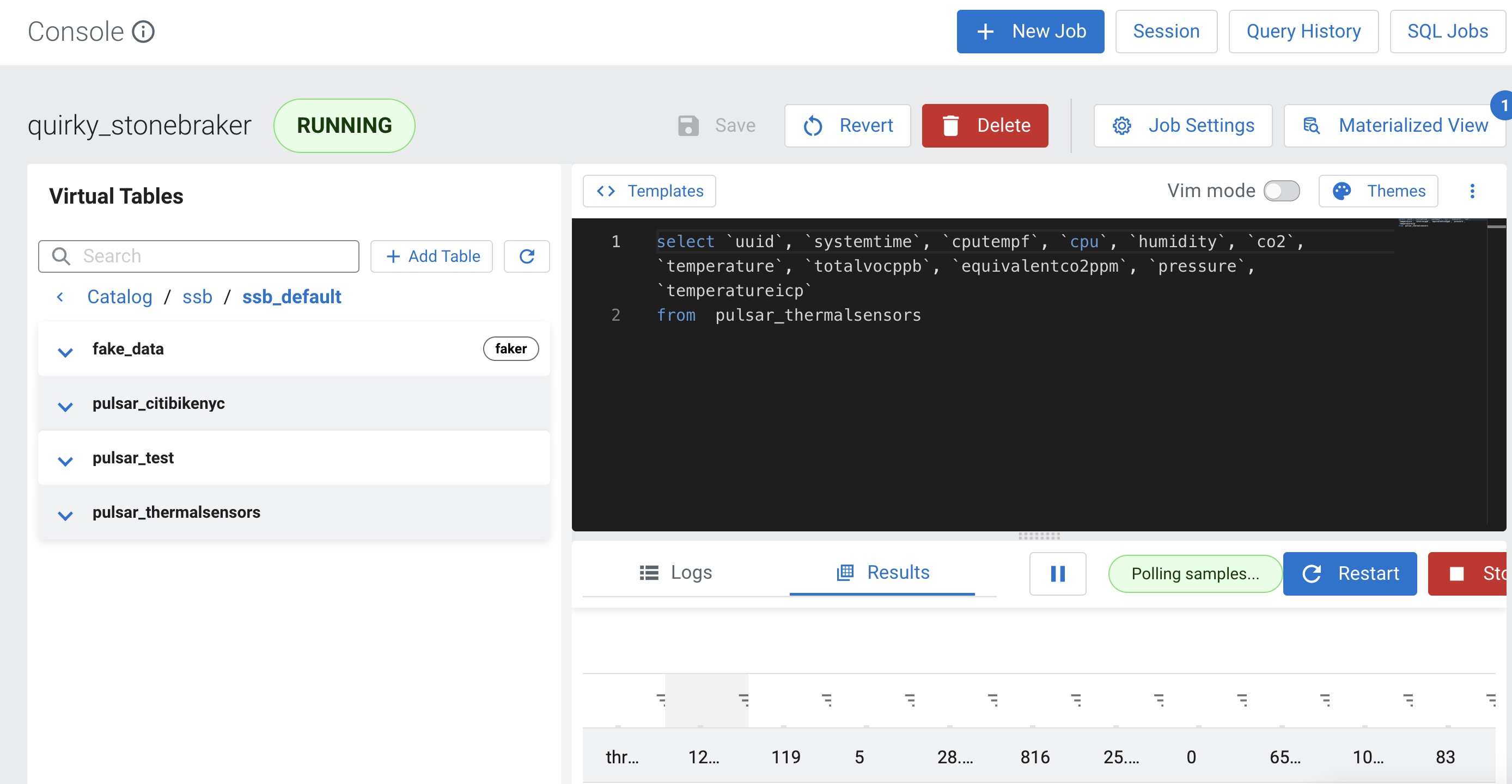

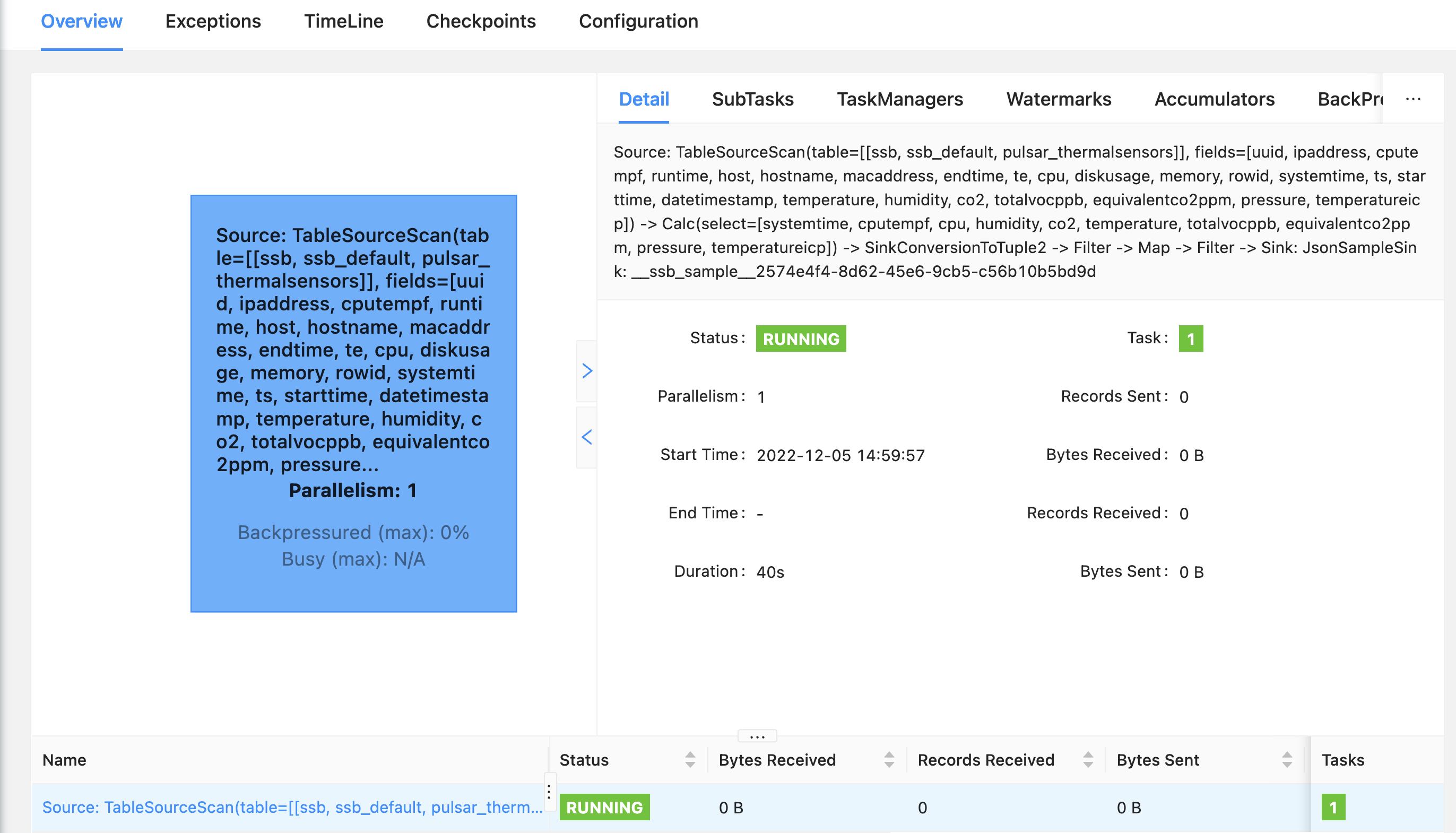

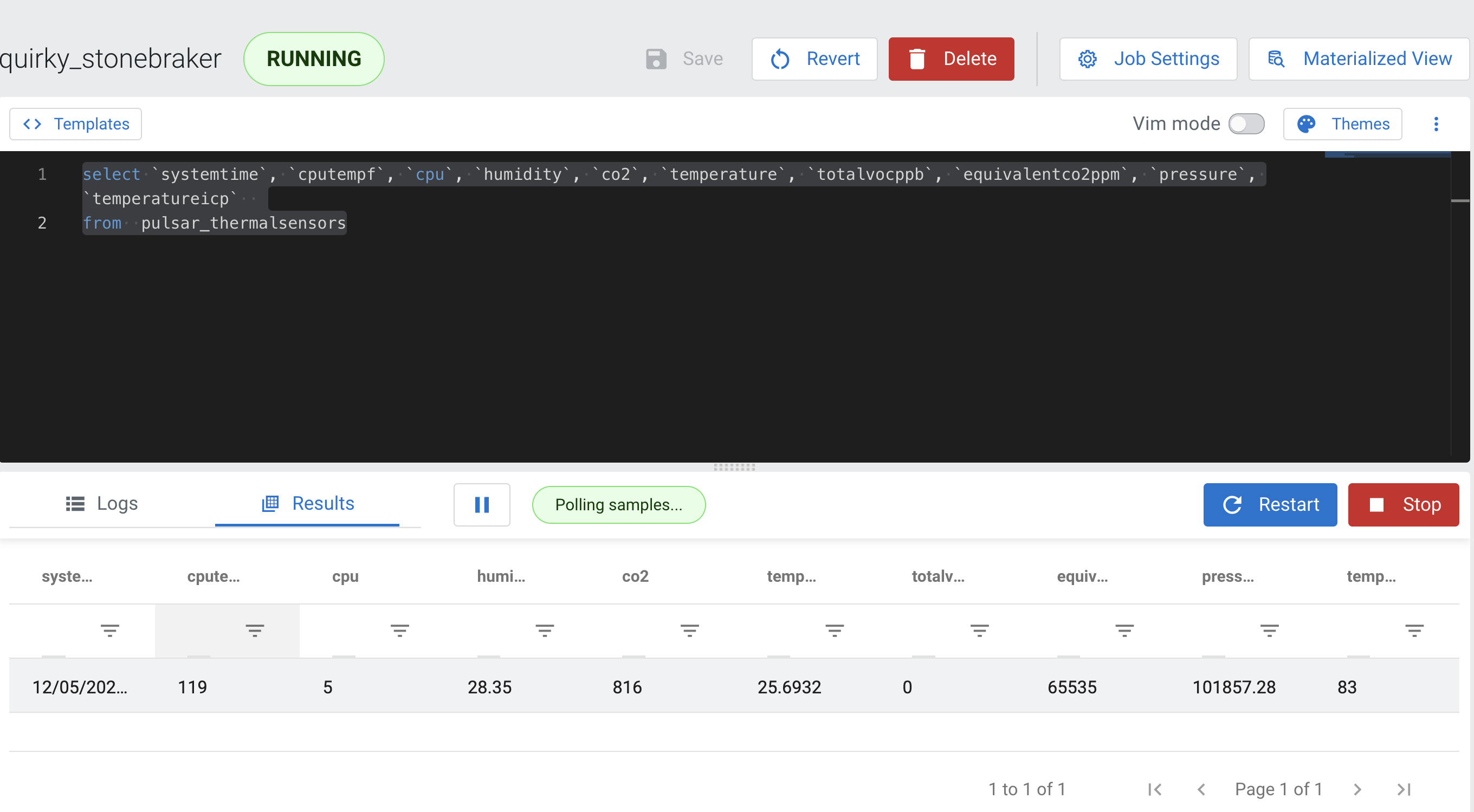

CREATE TABLE pulsar_thermalsensors (

`uuid` STRING NOT NULL,

`ipaddress` STRING NOT NULL,

`cputempf` INT NOT NULL,

`runtime` INT NOT NULL,

`host` STRING NOT NULL,

`hostname` STRING NOT NULL,

`macaddress` STRING NOT NULL,

`endtime` STRING NOT NULL,

`te` STRING NOT NULL,

`cpu` FLOAT NOT NULL,

`diskusage` STRING NOT NULL,

`memory` FLOAT NOT NULL,

`rowid` STRING NOT NULL,

`systemtime` STRING NOT NULL,

`ts` INT NOT NULL,

`starttime` STRING NOT NULL,

`datetimestamp` STRING NOT NULL,

`temperature` FLOAT NOT NULL,

`humidity` FLOAT NOT NULL,

`co2` FLOAT NOT NULL,

`totalvocppb` FLOAT NOT NULL,

`equivalentco2ppm` FLOAT NOT NULL,

`pressure` FLOAT NOT NULL,

`temperatureicp` FLOAT NOT NULL

) WITH (

'connector' = 'pulsar',

'topic' = 'persistent://public/default/thermalsensors',

'value.format' = 'json',

'service-url' = 'pulsar://Timothys-MBP:6650',

'admin-url' = 'http://Timothys-MBP:8080',

'scan.startup.mode' = 'earliest',

'generic' = 'true'

);
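The column list in `pulsar_thermalsensors` follows the types of the sensor message. As a rough sketch, the column lines can be derived from a sample record; the sample below is a four-field excerpt of the message shown earlier, and the Python-to-Flink type mapping is a simplification chosen to match this table (for example, Python floats map to `FLOAT` here rather than `DOUBLE`).

```python
# Four fields from the sample sensor message; the full message has 24.
SAMPLE = {
    "uuid": "thrml_wse_20221216202136",
    "cputempf": 122,
    "cpu": 5.5,
    "temperature": 30.5959,
}


def flink_type(value) -> str:
    """Map a Python value to the Flink SQL type used in the table above."""
    if isinstance(value, bool):   # bool is a subclass of int, so test it first
        return "BOOLEAN"
    if isinstance(value, int):
        return "INT"
    if isinstance(value, float):
        return "FLOAT"
    return "STRING"


def column_lines(sample: dict) -> list:
    """Return one backtick-quoted NOT NULL column definition per field."""
    return [f"`{name}` {flink_type(value)} NOT NULL"
            for name, value in sample.items()]


if __name__ == "__main__":
    print(",\n".join(column_lines(SAMPLE)))
```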

CREATE TABLE fake_data (

city STRING )

WITH (

'connector' = 'faker',

'rows-per-second' = '1',

'fields.city.expression' = '#{Address.city}'

);

insert into pulsar_test

select * from fake_data;

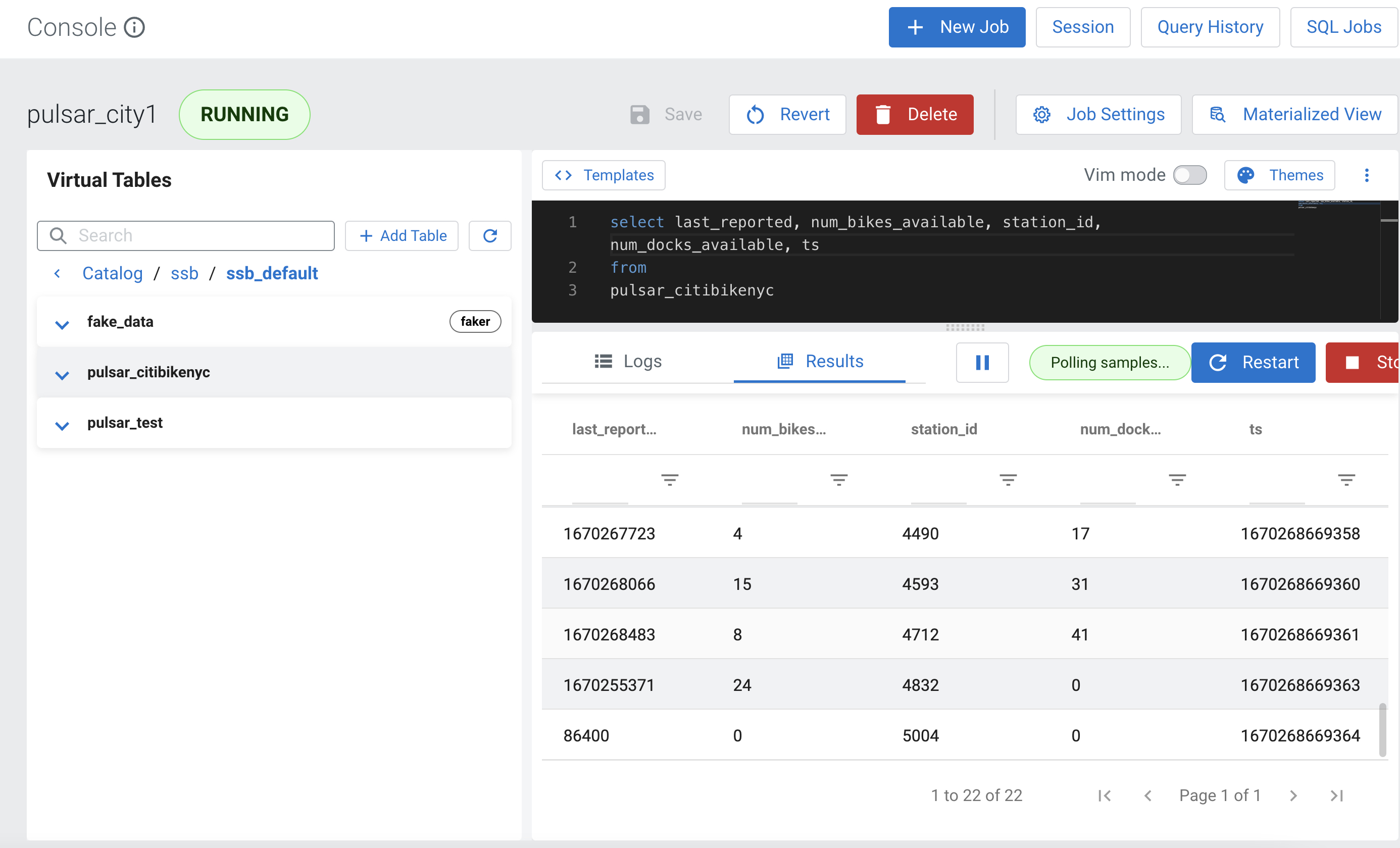

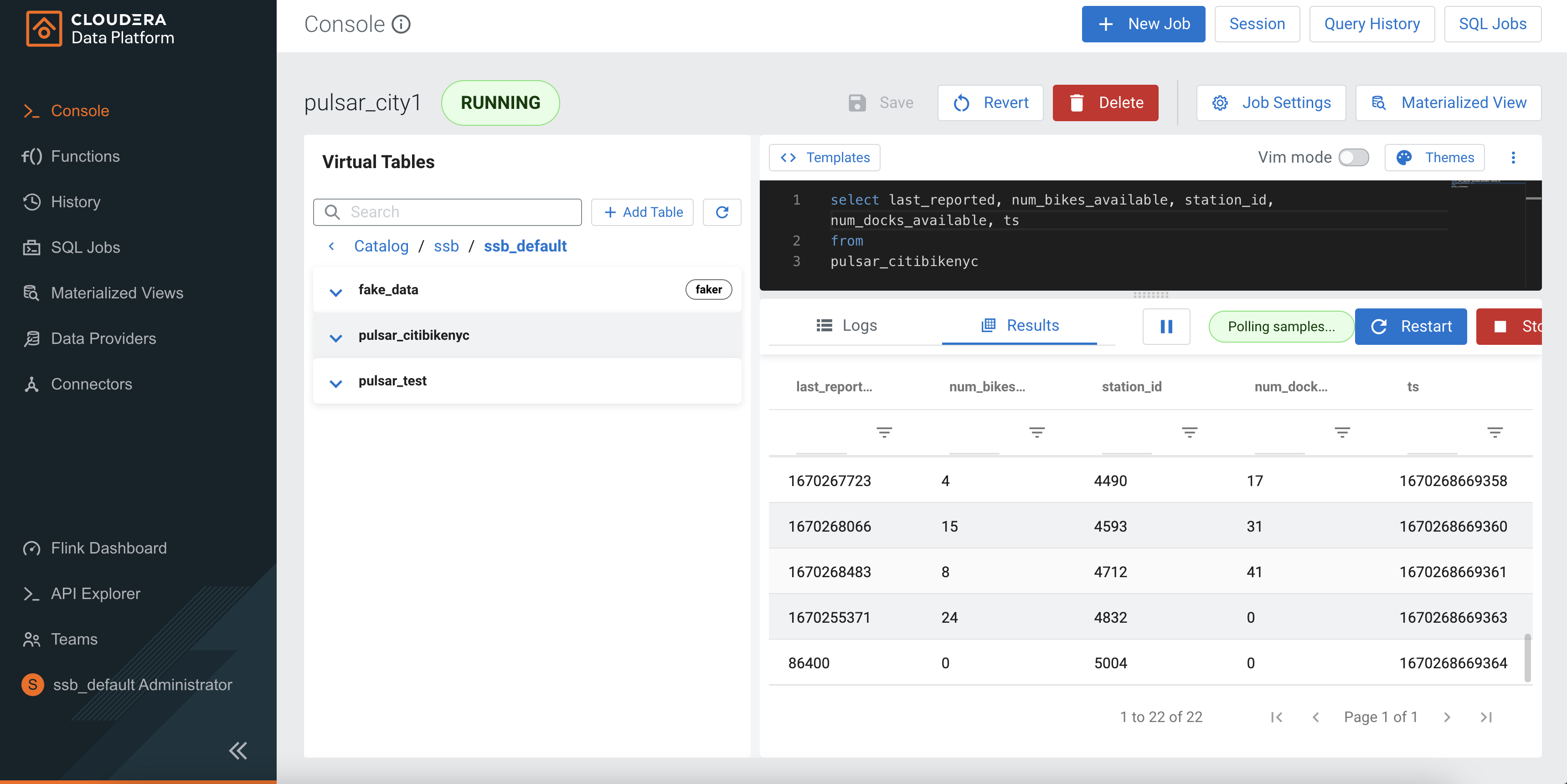

select last_reported, num_bikes_available, station_id, num_docks_available, ts

from

pulsar_citibikenyc;

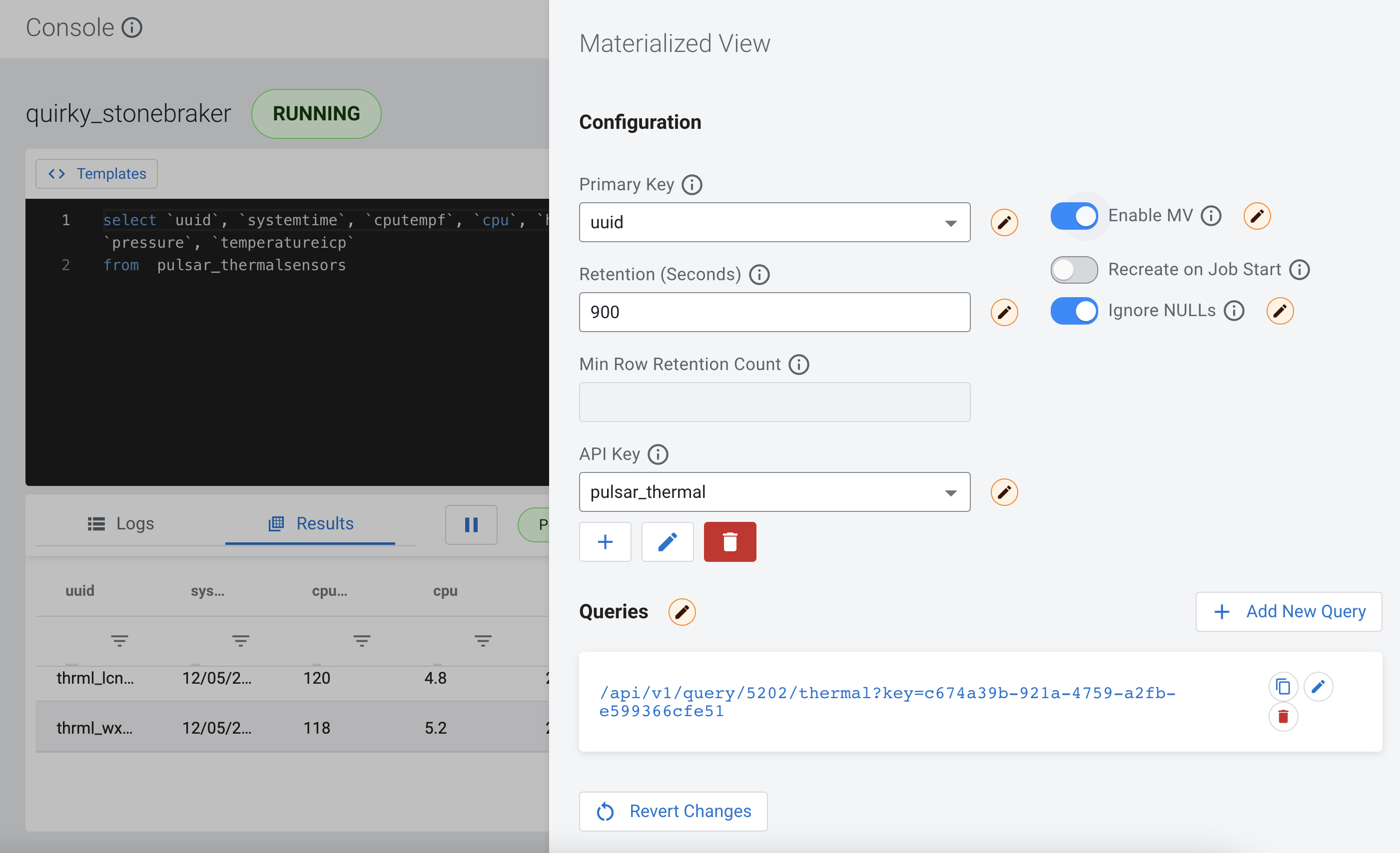

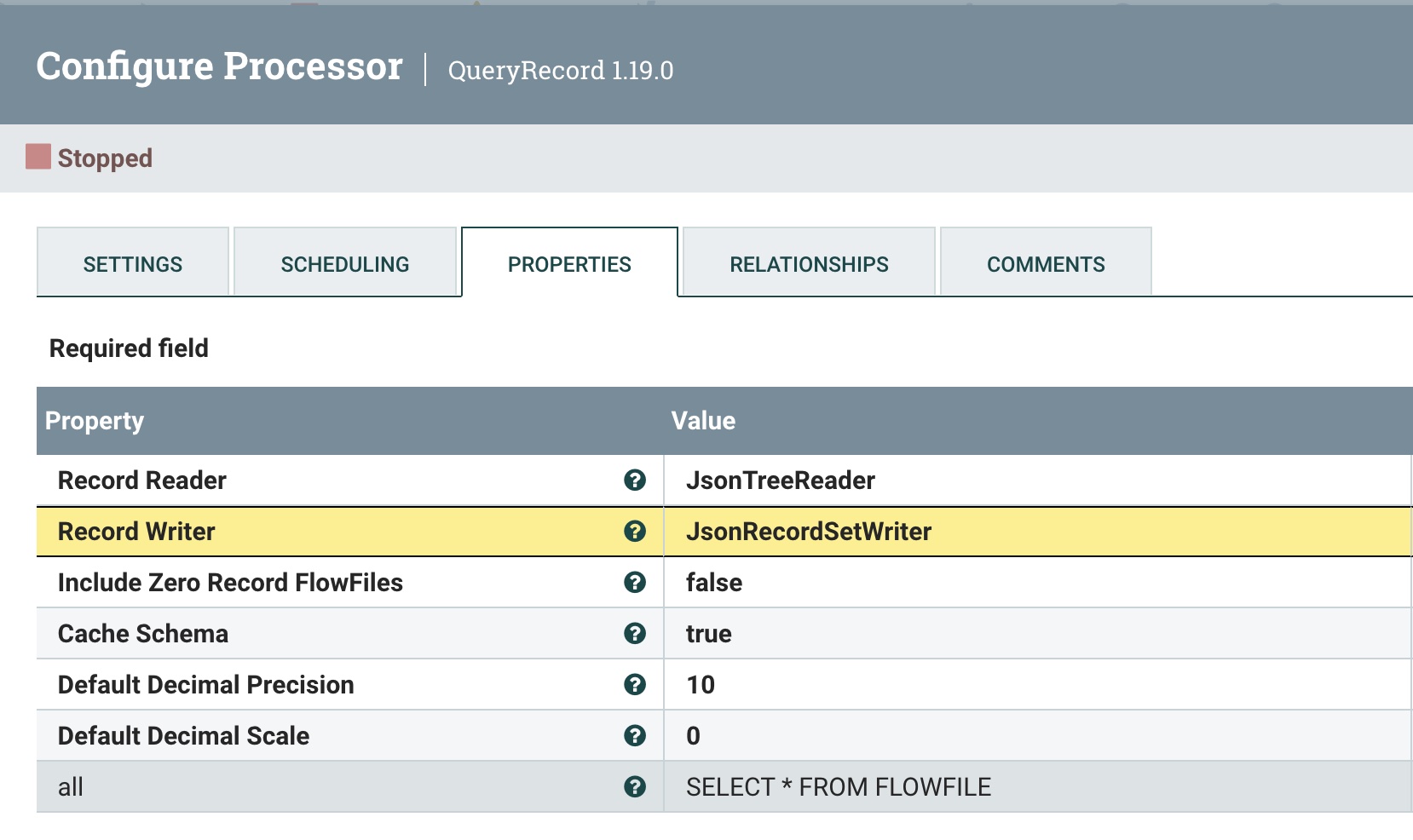

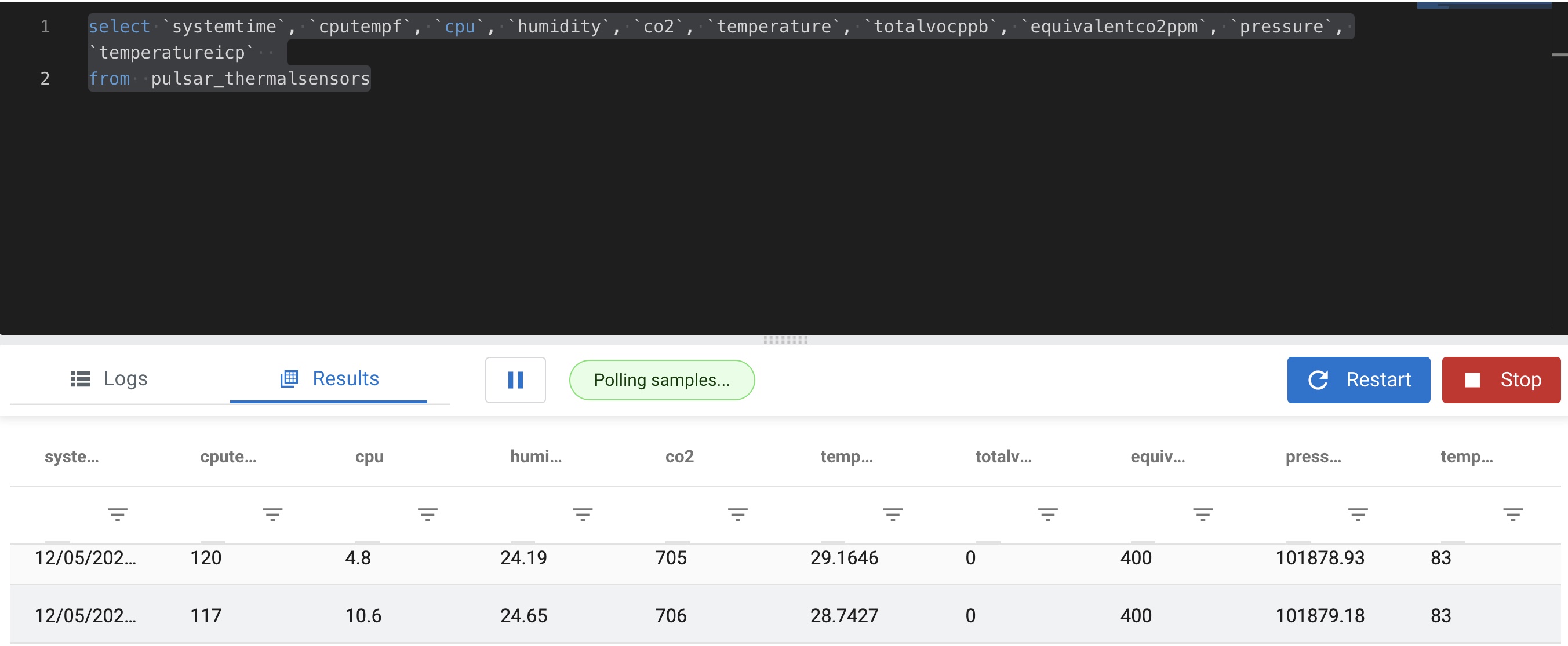

select `systemtime`, `cputempf`, `cpu`, `humidity`, `co2`, `temperature`, `totalvocppb`, `equivalentco2ppm`, `pressure`, `temperatureicp`

from pulsar_thermalsensors

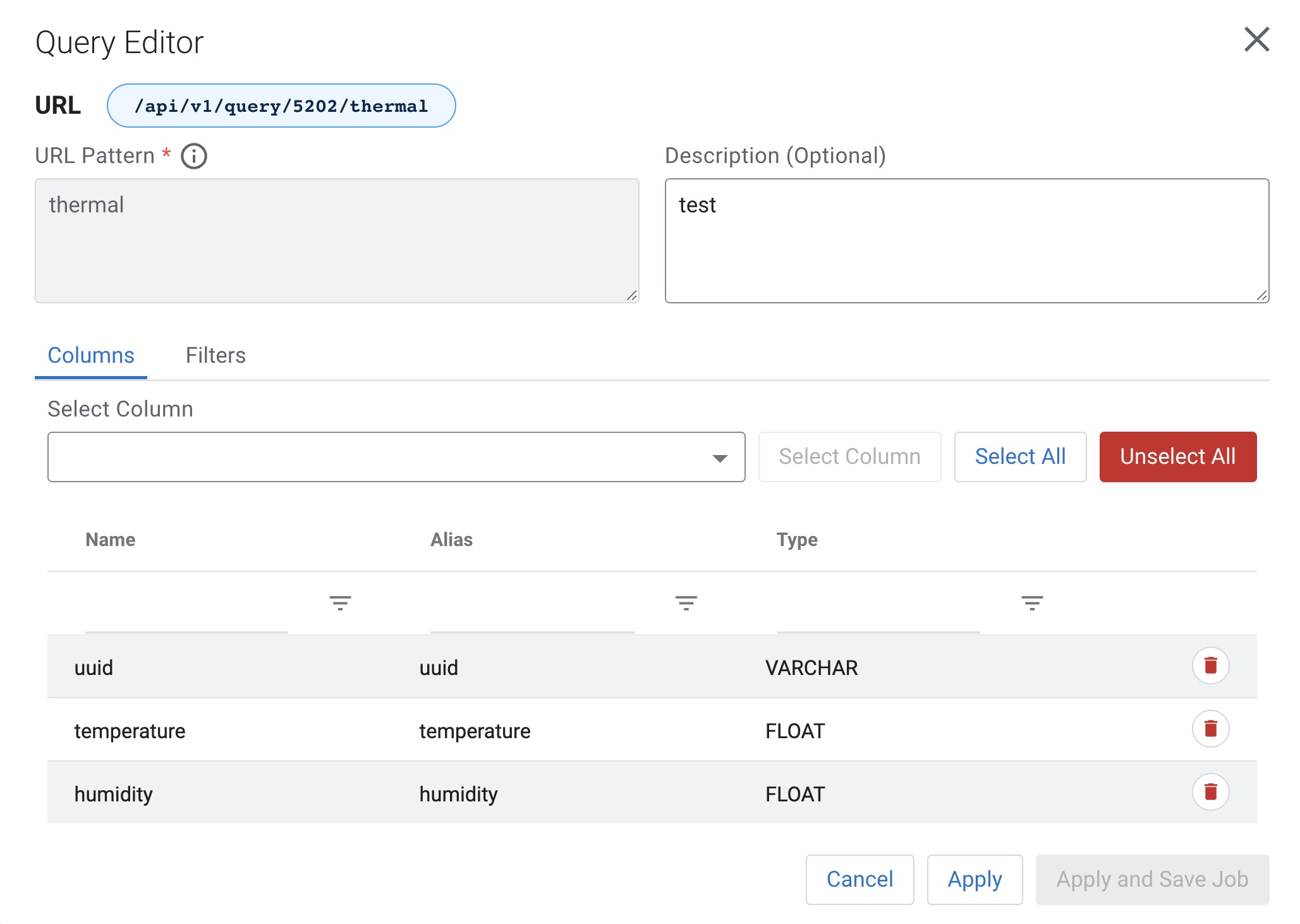

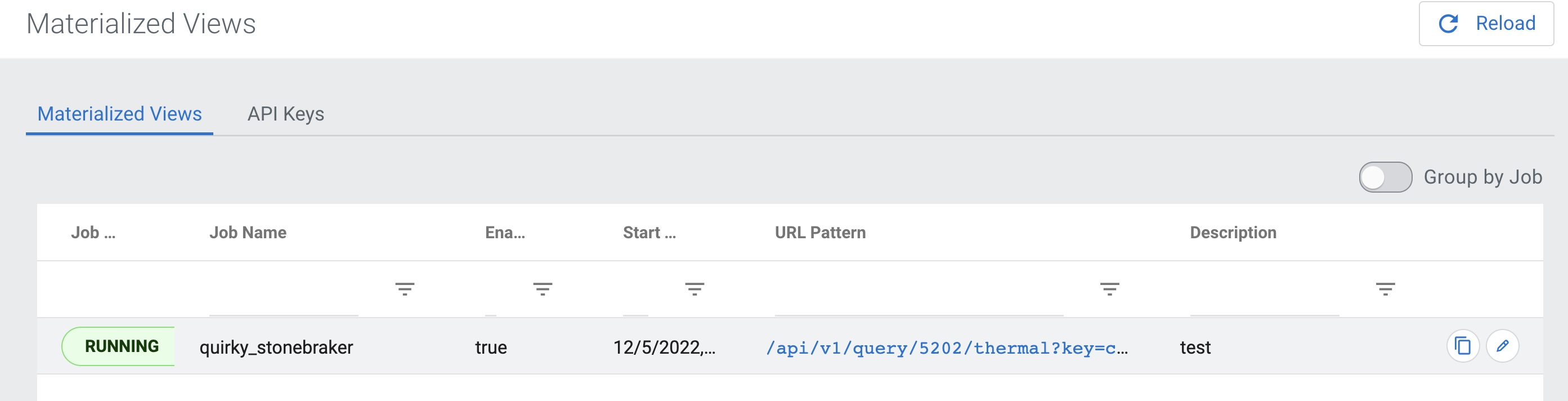

Create a Materialized View

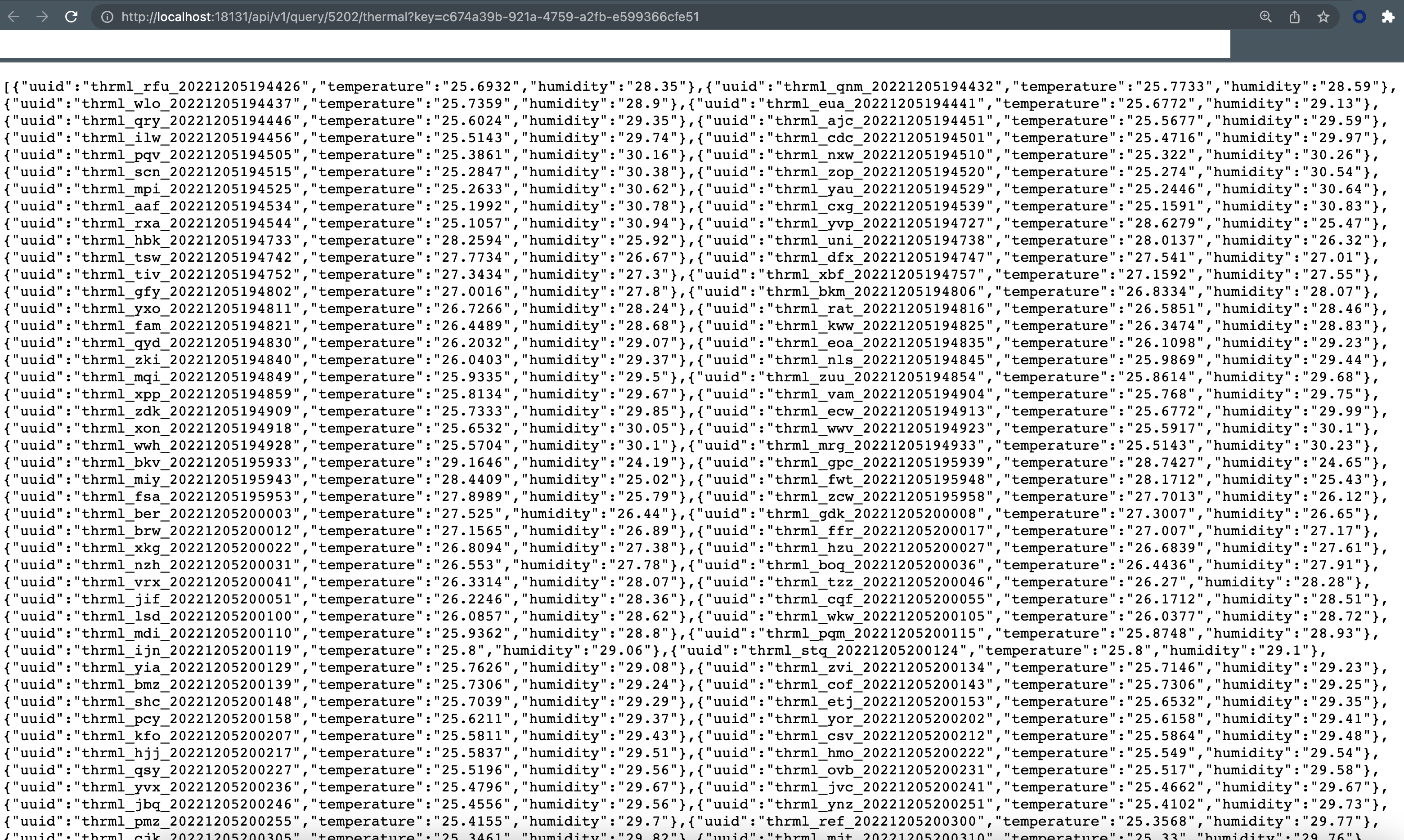

http://localhost:18131/api/v1/query/5202/thermal?key=c674a39b-921a-4759-a2fb-e599366cfe51

/api/v1/query/5202/thermal?key=c674a39b-921a-4759-a2fb-e599366cfe51
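A materialized view endpoint like the one above can be read with the Python standard library. This sketch rebuilds the URL from its parts and fetches it. It assumes the endpoint returns a JSON document, which is not shown in this post, and the helper names are illustrative.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen


def mv_url(host: str, port: int, job_id: int, view: str, key: str) -> str:
    """Build the materialized view URL in the form shown above."""
    query = urlencode({"key": key})
    return f"http://{host}:{port}/api/v1/query/{job_id}/{view}?{query}"


def fetch_rows(url: str, timeout: float = 10.0):
    """GET the endpoint and decode the body as JSON (assumed response format)."""
    with urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    url = mv_url("localhost", 18131, 5202, "thermal",
                 "c674a39b-921a-4759-a2fb-e599366cfe51")
    print(fetch_rows(url))
```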

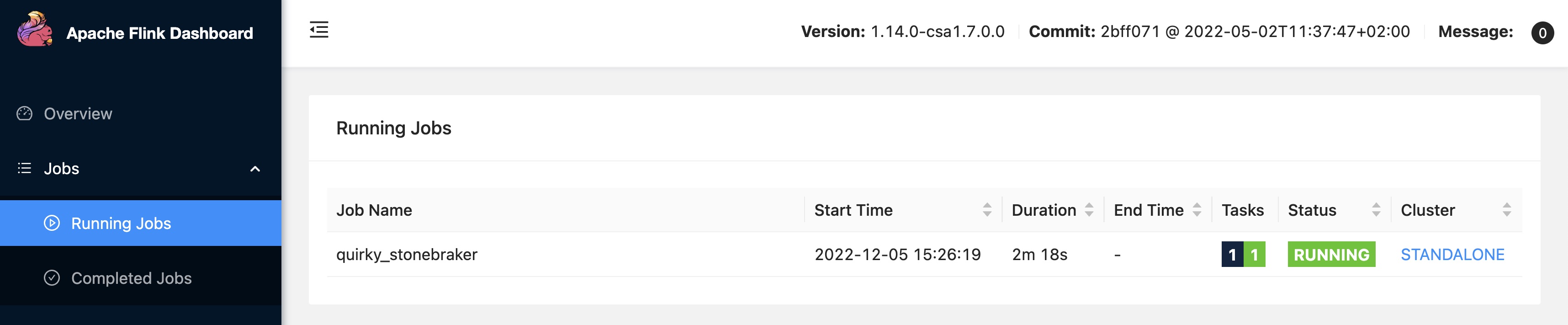

Running SQL Stream Builder (Flink SQL) against Pulsar

Containers Running Cloudera CSP CE in Docker

References

- https://github.com/tspannhw/create-nifi-pulsar-flink-apps

- https://github.com/streamnative/flink-example/blob/main/docker-compose.yml

- https://docs.cloudera.com/csp-ce/latest/index.html

Meetup

https://www.meetup.com/new-york-city-apache-pulsar-meetup/events/289674210/

TigerLabs in Princeton is on the 2nd floor; walk up and the door will be open. This is the same space we used for the old Future of Data - Princeton events from 2016 to 2019. Parking at the school is free, and nearby street parking is free. Some streets have meters, and a paid parking garage is a few blocks away.

We are joining forces with our friends at Cloudera again on a FLiPN amazing journey into Real-Time Streaming Applications with Apache Flink, Apache NiFi, and Apache Pulsar. Discover how to stream data to and from your data lake or data mart using Apache Pulsar™ and Apache NiFi®. Learn how these cloud-native, scalable open-source projects built for streaming data pipelines work together to enable you to quickly build applications with minimal coding.

- Apache NiFi

- Apache Pulsar

- Apache Flink

- Flink SQL

We will show you how to build apps, so download beforehand to Docker, K8, your Laptop, or the cloud.

- https://youtu.be/s80sz3NWwHo

- https://docs.cloudera.com/csp-ce/latest/index.html

- https://www.cloudera.com/downloads/cdf/csp-community-edition.html

- Apache Pulsar https://pulsar.apache.org/docs/getting-started-standalone/

- StreamNative Cloud https://streamnative.io/free-cloud/

- Cloudera + Pulsar https://community.cloudera.com/t5/Cloudera-Stream-Processing-Forum/Using-Apache-Pulsar-with-SQL-Stream-Builder/m-p/349917

- https://community.cloudera.com/t5/Community-Articles/Using-Apache-NiFi-with-Apache-Pulsar-for-Streaming/ta-p/337891

|AGENDA|

- 6:00 - 6:30 PM EST: Food, Drink, and Networking!!!

- 6:30 - 7:15 PM EST: Presentation - Tim Spann, StreamNative Developer Advocate

- 7:15 - 8:00 PM EST: Presentation - John Kuchmek, Cloudera Principal Solutions Engineer

- 8:00 - 8:30 PM EST: Round Table on Real-Time Streaming

- 8:30 - 9:00 PM EST: Q&A + Networking

|ABOUT THE SPEAKERS|

John Kuchmek is a Principal Solutions Engineer for Cloudera. Before joining Cloudera, John transitioned to the Autonomous Intelligence team where he was in charge of integrating the platforms to allow data scientists to work with various types of data.

Tim Spann is a Developer Advocate for StreamNative. He works with StreamNative Cloud, Apache Pulsar™, Apache Flink®, Flink® SQL, Big Data, the IoT, machine learning, and deep learning. Tim has over a decade of experience with the IoT, big data, distributed computing, messaging, streaming technologies, and Java programming.

See:

December 5, 2022: FLiP Stack Weekly

A few good talks and some cool stuff for the rest of the year.

Check out our channel:

https://www.youtube.com/@streamnativecommunity8124/featured

New Stuff

https://cwiki.apache.org/confluence/display/NIFI/Release+Notes#ReleaseNotes-Version1.19.0

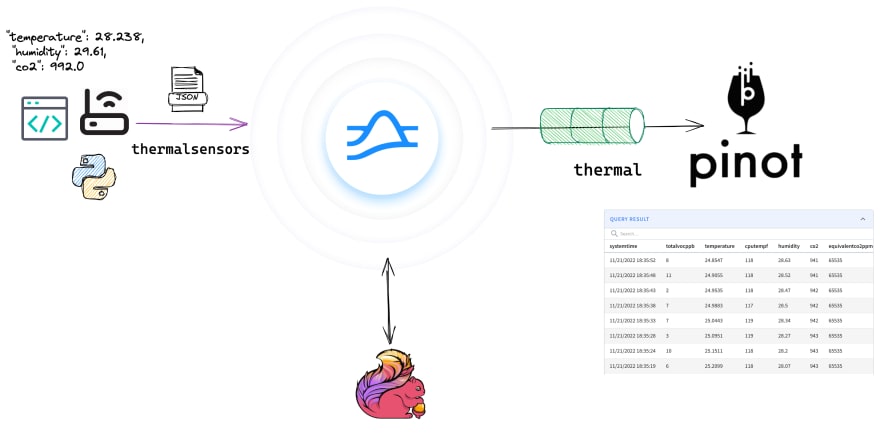

A quick preview of Apache Pulsar + Apache Pinot.

https://github.com/tspannhw/pulsar-thermal-pinot

PODCAST

Take a look at recent podcasts in audio or video format.

https://www.youtube.com/watch?v=U8aPBhlvDHU&feature=emb_imp_woyt

CODE + COMMUNITY

Join my meetup group NJ/NYC/Philly/Virtual. We will have a hybrid event on December 8th.

https://www.meetup.com/new-york-city-apache-pulsar-meetup/

This is Issue #61!!

https://github.com/tspannhw/FLiPStackWeekly

https://www.linkedin.com/pulse/2022-schedule-tim-spann

VIDEOS

https://www.youtube.com/embed/yU3UVhLz1Io

https://www.youtube.com/watch?v=346PAVtrJNE

https://www.youtube.com/watch?v=C684XAivnqg

https://www.youtube.com/watch?v=mAKAP71JfQs

ARTICLES

Cool award winners and friends: Apache Pulsar, Apache Iceberg, Starburst, Cloudera Data Platform, Apache Flink, Nvidia Jetson AGX Orin, Delta Lake, Databricks, Redis.

https://streamnative.io/blog/community/2022-12-01-pulsar-summit-asia-2022-recap/

https://betterprogramming.pub/going-native-with-spring-boot-3-ga-4e8d91ab21d3

https://github.com/riferrei/devrel-mastodon

https://www.ververica.com/blog/the-release-of-flink-cdc-2.3

https://inlong.apache.org/docs/quick_start/pulsar_example

TALKS

https://www.meetup.com/real-time-analytics-meetup-ny/events/290037437/

TRAINING

Dates are Dec 5 - Dec 8, 2022

Link to register: https://www.eventbrite.com/e/463731161387

Dates are January 17 - 19, 2023 from 2pm - 5pm CET / 8am - 12pm EST

Link to register: https://www.eventbrite.com/e/465055021087

https://streamnative.io/training/

CODE

https://github.com/timeplus-io/pulsar-io-sink

https://github.com/spring-projects/spring-aot-smoke-tests/tree/main/integration/spring-pulsar

https://github.com/streamnative/psat_exercise_code

EVENTS

Dec 8, 2022: Open Source Summit Finance NYC

https://events.linuxfoundation.org/open-source-finance-forum-new-york/

Dec 14, 2022: Manhattan, NYC: Pulsar + Pinot Meetup

https://www.meetup.com/new-york-city-apache-pulsar-meetup/events/289817171/

Dec 15, 2022: TigerLabs, Princeton, NJ: Pulsar + NiFi + Flink Meetup

https://www.meetup.com/new-york-city-apache-pulsar-meetup/events/289674210/

Data Science Camp Online

Machine Intelligence Guild

HTAP Summit

Coming soon.

Pinot / Pulsar Meetup

Coming soon.

CockroachDB NYC Meetup

Hazelcast Event

https://docs.hazelcast.com/hazelcast/5.1/integrate/pulsar-connector

TOOLS

https://catalog.redhat.com/software/operators/detail/62e3a42fb05a68e9d3fd21f4

https://www.pcjs.org/software/pcx86/app/ibm/basic/1.00/donkey/

https://github.com/ArmDeveloperEcosystem/ml-image-classification-example-for-openmv

https://github.com/streamnative/pulsar-recipes/tree/main/rpc

https://github.com/streamnative/pulsar-recipes/tree/main/long-running-tasks

TIPS

pip3.9 install 'pulsar-client[all]'

Google Sheet Hack! Import Any RSS Feed to a Sheet

=ImportFeed("http://example.com/feed")

JOBS

https://jobs.lever.co/stream-native/74d75fc1-1ad7-40a0-b907-66d8ac86009a