Creating An Email Bot in Apache NiFi (Consume and Send Email)

Some people say I must have a bot that reads and replies to email at all hours of the day. An email assistant like that sounded awesome, so I decided to prototype one.

This is the first piece. After this I will add some Spark machine learning to reply intelligently to emails from a list of pretrained responses. With supervised learning it will learn which emails to send to whom, based on Subject, From, body content, attachments, time of day, sender domain and many other variables.

For now, it just reads some emails and checks for a hard-coded subject.
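As a rough illustration of what "checking for a hard-coded subject" means, here is a minimal Python sketch of the same test done outside NiFi. The trigger value `test` and the function name `matches_trigger` are assumptions made for this example; in the actual flow the check is done by NiFi itself.

```python
import email

# Hypothetical hard-coded subject used only for this illustration.
TRIGGER_SUBJECT = "test"


def matches_trigger(raw_message, trigger=TRIGGER_SUBJECT):
    """Return True when the Subject header equals the trigger (case-insensitive)."""
    msg = email.message_from_string(raw_message)
    # A missing Subject header is treated as an empty subject.
    subject = msg.get("Subject", "")
    return subject.strip().lower() == trigger.lower()


sample = "From: x@example.com\nTo: nifi@example.com\nSubject: test\n\nhello\n"
print(matches_trigger(sample))
```

A match like this is the point where another process, such as a batch job, could be kicked off.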

I could use this to trigger other processes, such as running a batch Spark job.

Since most people send and read HTML email (that is what Outlook, Outlook.com and Gmail do), I will send and receive HTML emails to make the bot look more legitimate.
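To show what an HTML reply looks like on the wire, here is a small sketch that builds one with Python's standard `email.mime` classes. It is not how the NiFi flow sends mail; the function name `build_html_reply` and the addresses are placeholders chosen for this example.

```python
from email.mime.multipart import MIMEMultipart
from email.mime.text import MIMEText


def build_html_reply(sender, recipient, subject, html_body, text_body):
    """Build a multipart/alternative reply with plain-text and HTML versions."""
    msg = MIMEMultipart("alternative")
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = "Re: " + subject
    # Plain text goes first and HTML last: mail clients generally display
    # the last alternative they are able to render.
    msg.attach(MIMEText(text_body, "plain", "utf-8"))
    msg.attach(MIMEText(html_body, "html", "utf-8"))
    return msg


reply = build_html_reply(
    "nifi@example.com",
    "x@example.com",
    "test",
    "<html><body><p>Thanks, I got your <b>message</b>.</p></body></html>",
    "Thanks, I got your message.",
)
print(reply.as_string())
```

Including a plain-text alternative keeps the reply readable in clients that do not render HTML.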

I could also run my fortune script and return its output as the email content, making me sound wise, or pull in a random selection of tweets about Hadoop or even recent news to keep the email current and fresh.

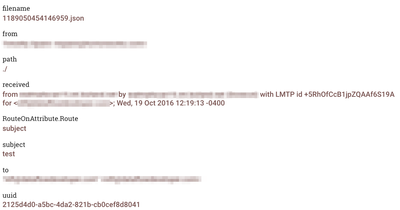

Snippet Example of a Mixed Content Email Message (Attachments Removed to Save Space)

    Return-Path: <x@example.com>
    Delivered-To: nifi@example.com
    Received: from x.x.net
        by x.x.net (Dovecot) with LMTP id +5RhOfCcB1jpZQAAf6S19A
        for <nifi@example.com>; Wed, 19 Oct 2016 12:19:13 -0400
    Return-path: <x@example.com>
    Envelope-to: nifi@example.com
    Delivery-date: Wed, 19 Oct 2016 12:19:13 -0400
    Received: from [x.x.x.x] (helo=smtp.example.com)
        by x.example.com with esmtp (Exim)
        id 1bwtaC-0006dd-VQ
        for nifi@example.com; Wed, 19 Oct 2016 12:19:12 -0400
    Received: from x.x.net ([x.x.x.x])
        by x with bizsmtp
        id xUKB1t0063zlEh401UKCnK; Wed, 19 Oct 2016 12:19:12 -0400
    X-EN-OrigIP: 64.78.52.185
    X-EN-IMPSID: xUKB1t0063zlEh401UKCnK
    Received: from x.x.net (localhost [127.0.0.1])
        (using TLSv1 with cipher AES256-SHA (256/256 bits))
        (No client certificate requested)
        by emg-ca-1-1.localdomain (Postfix) with ESMTPS id BEE9453F81
        for <nifi@example.com>; Wed, 19 Oct 2016 09:19:10 -0700 (PDT)
    Subject: test
    MIME-Version: 1.0
    x-echoworx-msg-id: e50ca00a-edc5-4030-a127-f5474adf4802
    x-echoworx-emg-received: Wed, 19 Oct 2016 09:19:10.713 -0700
    x-echoworx-message-code-hashed: 5841d9083d16bded28a3c4d33bc505206b431f7f383f0eb3dbf1bd1917f763e8
    x-echoworx-action: delivered
    Received: from 10.254.155.15 ([10.254.155.15])
        by emg-ca-1-1 (JAMES SMTP Server 2.3.2) with SMTP ID 503
        for <nifi@example.com>;
        Wed, 19 Oct 2016 09:19:10 -0700 (PDT)
    Received: from x.x.net (unknown [x.x.x.x])
        (using TLSv1 with cipher AES256-SHA (256/256 bits))
        (No client certificate requested)
        by emg-ca-1-1.localdomain (Postfix) with ESMTPS id 6693053F86
        for <nifi@example.com>; Wed, 19 Oct 2016 09:19:10 -0700 (PDT)
    Received: from x.x.net (x.x.x.x) by
        x.x.net (x.x.x.x) with Microsoft SMTP
        Server (TLS) id 15.0.1178.4; Wed, 19 Oct 2016 09:19:09 -0700
    Received: from x.x.x.net ([x.x.x.x]) by
        x.x.x.net ([x.x.x.x]) with mapi id
        15.00.1178.000; Wed, 19 Oct 2016 09:19:09 -0700
    From: x x<x@example.com>
    To: "nifi@example.com" <nifi@example.com>
    Thread-Topic: test
    Thread-Index: AQHSKiSFTVqN9ugyLEirSGxkMiBNFg==
    Date: Wed, 19 Oct 2016 16:19:09 +0000
    Message-ID: <D49AD137-3765-4F9A-BF98-C4E36D11FFD8@hortonworks.com>
    Accept-Language: en-US
    Content-Language: en-US
    X-MS-Has-Attach: yes
    X-MS-TNEF-Correlator:
    x-ms-exchange-messagesentrepresentingtype: 1
    x-ms-exchange-transport-fromentityheader: Hosted
    x-originating-ip: [71.168.178.39]
    x-source-routing-agent: Processed
    Content-Type: multipart/related;
        boundary="_004_D49AD13737654F9ABF98C4E36D11FFD8hortonworkscom_";
        type="multipart/alternative"

    --_004_D49AD13737654F9ABF98C4E36D11FFD8hortonworkscom_
    Content-Type: multipart/alternative;
        boundary="_000_D49AD13737654F9ABF98C4E36D11FFD8hortonworkscom_"

    --_000_D49AD13737654F9ABF98C4E36D11FFD8hortonworkscom_
    Content-Type: text/plain; charset="utf-8"
    Content-Transfer-Encoding: base64
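The nesting in a message like this (containers holding alternatives, with a base64-encoded text part inside) is easier to see by walking the MIME tree. The sketch below uses a tiny hand-made message rather than the one above; `RAW`, `describe_parts` and `first_plain_text` are names invented for this illustration.

```python
import email

# A small stand-in message with the same kind of structure:
# a multipart container holding a base64 text part and an HTML part.
RAW = """\
From: x@example.com
To: nifi@example.com
Subject: test
MIME-Version: 1.0
Content-Type: multipart/alternative; boundary="BOUNDARY"

--BOUNDARY
Content-Type: text/plain; charset="utf-8"
Content-Transfer-Encoding: base64

aGVsbG8=

--BOUNDARY
Content-Type: text/html; charset="utf-8"

<p>hello</p>
--BOUNDARY--
"""


def describe_parts(raw_message):
    """List (content type, transfer encoding) for every part, containers included."""
    msg = email.message_from_string(raw_message)
    return [(part.get_content_type(), part.get("Content-Transfer-Encoding"))
            for part in msg.walk()]


def first_plain_text(raw_message):
    """Return the decoded text of the first text/plain part, or None."""
    msg = email.message_from_string(raw_message)
    for part in msg.walk():
        if part.get_content_type() == "text/plain":
            # decode=True undoes the base64 / quoted-printable transfer encoding.
            payload = part.get_payload(decode=True)
            charset = part.get_content_charset() or "utf-8"
            return payload.decode(charset)
    return None


for content_type, encoding in describe_parts(RAW):
    print(content_type, encoding)
print(first_plain_text(RAW))
```

The `walk()` call visits the container first and then each nested part, which is why the parsing script skips anything whose main type is `multipart`.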

Python Script to Parse Email Messages

    #!/usr/bin/env python
    """Unpack a MIME message read from stdin into a directory of files."""

    import email
    import errno
    import json
    import mimetypes
    import os
    import sys
    from optparse import OptionParser


    def main():
        parser = OptionParser(usage="""Unpack a MIME message into a directory of files.

    Usage: %prog [options] < msgfile
    """)
        parser.add_option('-d', '--directory',
                          type='string', action='store',
                          help="""Unpack the MIME message into the named
                          directory, which will be created if it doesn't already
                          exist.""")
        opts, args = parser.parse_args()

        # A target directory is required; without one there is nowhere to write.
        if not opts.directory:
            parser.print_help()
            sys.exit(1)

        try:
            os.makedirs(opts.directory)
        except OSError as e:
            # Ignore directory exists error
            if e.errno != errno.EEXIST:
                raise

        # The raw message arrives on stdin.
        msg = email.message_from_string(sys.stdin.read())

        # Emit the key headers as a single JSON object on stdout.
        response = {'To': msg['to'], 'From': msg['from'],
                    'Subject': msg['subject'], 'Received': msg['Received']}
        print(json.dumps(response))

        counter = 1
        for part in msg.walk():
            # multipart/* are just containers
            if part.get_content_maintype() == 'multipart':
                continue
            # Keep only the base name so that an email message can't be
            # used to write files outside the target directory.
            filename = part.get_filename()
            if filename:
                filename = os.path.basename(filename)
            if not filename:
                ext = mimetypes.guess_extension(part.get_content_type())
                if not ext:
                    # Use a generic bag-of-bits extension
                    ext = '.bin'
                filename = 'part-%03d%s' % (counter, ext)
            counter += 1
            payload = part.get_payload(decode=True)
            if payload is None:
                # Nothing to write for this part (for example a nested message).
                continue
            with open(os.path.join(opts.directory, filename), 'wb') as fp:
                fp.write(payload)


    if __name__ == '__main__':
        main()
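The script's comment about sanitizing attachment filenames can be taken a step further. The helper below is one possible approach, not part of the original script: `safe_filename` and its `default` argument are hypothetical names, and the whitelist of allowed characters is an assumption that may be too strict for some uses.

```python
import os
import re


def safe_filename(filename, default="attachment.bin"):
    """Reduce an attachment name to a harmless base name."""
    # Treat backslashes as separators too, then keep only the last component.
    name = os.path.basename(filename.replace("\\", "/"))
    # Replace anything outside a conservative set of characters.
    name = re.sub(r"[^A-Za-z0-9._-]", "_", name)
    # Drop leading dots so the result is never '.', '..' or a hidden file.
    name = name.lstrip(".")
    return name or default


for candidate in ["report.pdf", "../../etc/passwd", "..\\..\\boot.ini", ".."]:
    print(candidate, "->", safe_filename(candidate))
```

A helper like this would be applied to the value returned by `part.get_filename()` before joining it with the output directory.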

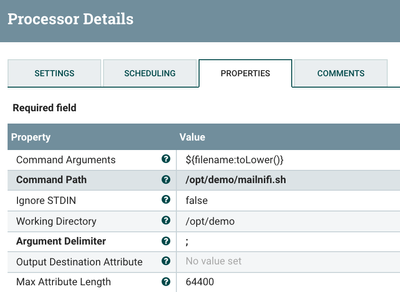

mailnifi.sh

    python mailnifi.py -d /opt/demo/email/"$@"

The email package used to parse the message is part of the Python standard library, so there is nothing to install with pip.

I am using Python 2.7; you could also use a newer Python 3.x.

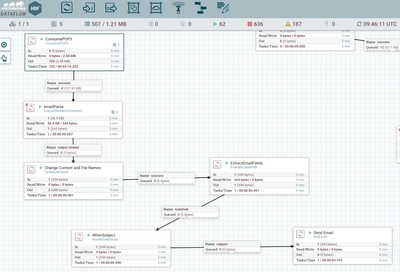

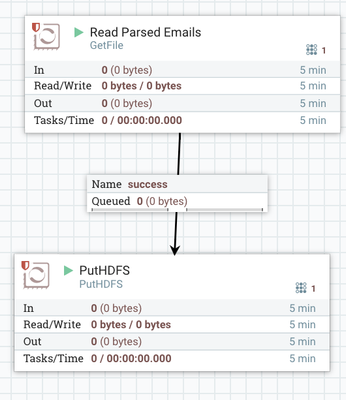

Here is the flow:

For the final part of the flow, the standard GetFile processor reads the files created by the parsing step and deletes them from the local file system, and the flow then loads them into HDFS.

Files:

email-assistant-12-jan-2017.xml